Ask a quality engineer about the biggest obstacle to deploying AI-powered inspection on their line, and the answer is almost always the same: data—specifically, the lack of it. Defects — the very things the system needs to learn — are rare by definition. A well-run production line might produce one bad part in every thousand. That means waiting months before you’ve collected enough defect images to train a model. By that time, the production batch has moved on, the part design may have changed, and the project has stalled.

Few-shot learning breaks this bottleneck. It’s the technical reason modern AI visual inspection systems can train an accurate defect model using as few as 5 labeled images per defect type, rather than the hundreds or thousands that conventional deep learning approaches require. This article explains how few-shot learning works, why it matters on the factory floor, and what it actually looks like in practice — including where the limits are.

Key Takeaways

- Few-shot learning enables AI defect models to train on as few as 5 images, eliminating the months-long data collection phase that stalls most traditional AI deployments.

- The technique utilizes pre-trained models built on years of vertical industry data, enabling AI to rapidly learn defect features from a small sample set.

- Sample quality matters more than quantity — a handful of well-annotated, representative images outperforms hundreds of poor-quality ones.

- Generative AI amplifies this value—using just one defective image, it can generate multiple variations of the defect, achieving similarity while remaining distinct.

Why “AI Needs Big Data” Is No Longer the Whole Story

The assumption that AI always needs thousands of labeled examples was accurate for early deep learning architectures. A ResNet-style convolutional neural network trained from scratch on an industrial defect classification task genuinely needs large datasets to generalize — one benchmark places the practical floor at around 1,000 labeled images per defect class for a standard supervised classification setup.

But the field has moved significantly. Two developments changed the equation for manufacturing in particular.

What 5 Images Actually Means in Practice

The “5 images” figure isn’t marketing shorthand — it’s a real threshold achievable under the right conditions. But it’s worth being precise about what those conditions are, because the answer helps illuminate the engineering behind systems like UnitX’s CorteX AI training platform.

The Quality Bar for Each Image

Five high-quality, well-lit, and precisely annotated images of a scratch defect on an aluminum housing will outperform fifty poorly captured, inconsistently labeled ones. “Quality” here means the defect is clearly rendered by the imaging system, the labeling accurately traces the defect boundary (pixel-level segmentation, not just a bounding box), and the images represent the natural variation of that defect type — different sizes, slight positional variation, and different stages of severity.

This is where the imaging system matters as much as the AI. OptiX’s software-defined lighting — with 32 independently controllable light sources that cycle through 50 illumination patterns per second — ensures that defect-capture images are as informationally rich as possible, making each of those 5 training samples far more valuable than images captured under fixed, single-direction lighting.

The Role of Defect Type Complexity

Five images is a practical lower bound for relatively consistent defect types: a scratch with predictable geometry, a surface pit with characteristic depth, or a color deviation on a coated surface. For highly variable, morphologically complex defects — like a weld spatter pattern that changes shape significantly with temperature — you may need more or need to supplement with synthetic data.

The training time is correspondingly fast. CorteX can train a new feature type in as little as 2 minutes, which means that as new defect patterns emerge on a production line, the model can be updated and redeployed within a single shift — not over weeks.

The Data Scarcity Problem: Why It Hits Manufacturing Harder

Manufacturing quality inspection faces a data challenge that is structurally different from most other AI domains. In consumer internet applications,data is continuously generated — user clicks, search queries, uploaded image. In a factory, defect images are produced only when something goes wrong, and the entire purpose of quality control is to make that happen as rarely as possible.

This creates a paradox: the better the manufacturing process, the harder it is to collect the training data needed to automate its inspection.

New Part Types and Model Changeovers

The problem intensifies with high-mix production. A Tier 1 automotive supplier running 40 different part numbers doesn’t have months of defect image history for each one. When a new part launches — or when an existing part design changes — the AI model needs to start essentially from zero. Without few-shot capability, that means a prolonged data collection period before the system reaches production-level accuracy. With few-shot learning, the same team can stand up a working model in the same week the part launches.

This represents a fundamental shift in how AI deployment timelines work in manufacturing. A system that requires 2,000 labeled images before it can operate cannot scale across an enterprise that changes product configurations every quarter.

Rare Defect Classes

Some defect types are catastrophic but genuinely rare — a crack in a structural component, for instance, or a contamination event that happens once in thousands of cycles. These defects are exactly the ones quality systems most need to catch, yet they may appear only once or twice in months of production.

Conventional deep learning simply cannot train on 2 examples. Few-shot learning can — particularly when paired with Flex-Gen, UnitX’s generative AI platform, which synthesizes additional training images from as few as 3 real samples.

Few-Shot Learning vs. Standard Deep Learning: A Comparison

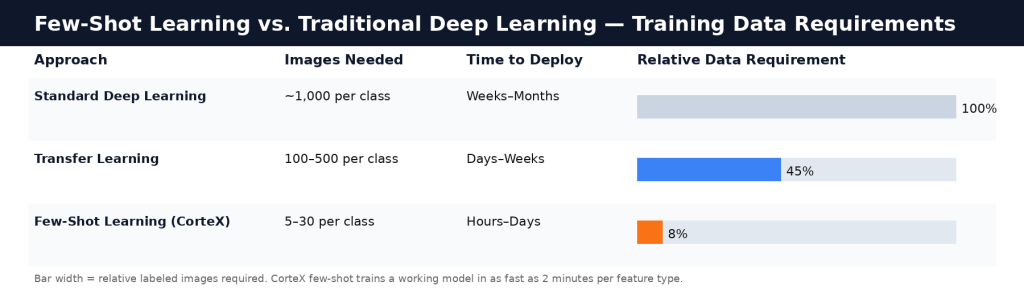

| Dimension | Standard Deep Learning | Few-Shot Learning |

| Minimum images per defect class | ~1,000 labeled images | 5–30 labeled images |

| Time to reach production accuracy | Weeks to months of data collection | Hours to days |

| Handles new part types | Requires retraining from scratch | Rapid model adaptation |

| Rare defect class support | Poor — insufficient training examples | Strong — designed for data scarcity |

| Dependence on data infrastructure | High — large labeled dataset required | Low — small annotated sets sufficient |

| Best suited for | High-volume, stable product lines with abundant defect history | High-mix lines, new product launches, and rare defect types |

Labeling Quality: The Variable That Determines Everything

If few-shot learning is about making every image count, labeling is where that value is created or destroyed. A mislabeled training image doesn’t just fail to help — it actively degrades model performance because the model learns to associate a feature with the wrong class.

Pixel-level Labeling — tracing the exact boundary of the defect rather than just drawing a box around the region — is what enables the deep learning segmentation model to learn precise shape and boundary characteristics. This granularity allows for threshold tuning: if you can define the exact pixel-level extent of a defect, you can set precise size thresholds that distinguish a cosmetic blemish (acceptable) from a structural crack (critical reject).

CorteX includes integrated labeling tooling specifically designed for factory engineers without data science backgrounds. The workflow is built around accuracy and consistency at small sample sizes — because in a few-shot regime, there is no massive volume of data to smooth over labeling errors.

Few-shot learning reduces the labeled image requirement from ~1,000 per class to 5–30, enabling manufacturers to deploy AI inspection without months of defect data collection.

Where Few-Shot Learning Reaches Its Limits

Few-shot learning is a powerful tool for solving the data scarcity problem — but it is not a universal substitute for larger datasets when data is genuinely available. There are scenarios where it faces real constraints.

High-Variance Defect Morphology

If a defect type has extremely high visual variability — a corrosion pattern that looks completely different at various humidity levels, for example — 5 images may not adequately represent that variability. The model may become overfit to the narrow examples it has seen, missing defect instances that fall outside the range it learned. In these cases, combining few-shot learning with generative synthetic data (as in FleX-Gen) is the practical solution: generate additional samples that extend coverage across the morphological range.

Class Imbalance and Confidence Calibration

A few-shot model trained on 5 scratch images and 5 pit images doesn’t inherently know that, in production, scratches may occur 20 times more often than pits. The model’s confidence calibration — how certain it needs to be before classifying something as a defect — may require tuning post-deployment as real production data accumulates. This is expected behavior, not a failure. CorteX’s threshold adjustment interface is designed specifically for this iterative calibration process.

The UnitX Applications Engineering team, which has overseen deployments across 190+ manufacturers, consistently observes that the most successful few-shot deployments treat the initial 5-image model as a starting point rather than a final product — deploying early, then refining with real production data as it becomes available.

Few-Shot Learning in Context: The Full Deployment Stack

Few-shot learning doesn’t operate in isolation. It’s one component of a broader system that makes rapid, accurate deployment possible. The workflow typically looks like this: OptiX captures high-fidelity images under controlled, software-defined illumination → CorteX labeling tools enable precise, pixel-level labeling → few-shot learning trains an initial model in minutes → the model is deployed to an inference engine running at up to 100 MP/s → production data feeds back into model refinement → FleX-Gen generates synthetic defect images to fill gaps for rare defect classes.

This is why the “5 images” claim is achievable in practice — not just in a research paper. It’s the result of the entire stack being engineered for data efficiency, not just the AI model itself.

Interested in how CorteX’s small-sample AI applies to your specific part type? Talk to UnitX experts — they can walk through what a realistic deployment timeline looks like based on your defect catalog and production volumes.

Frequently Asked Questions

How many images does few-shot learning actually need in manufacturing?

Practical few-shot learning for industrial defect detection typically operates in the 5–30 image range per defect class. The exact number depends on defect morphology complexity and labeling quality — a consistent, well-characterized defect type with precise pixel-level labeling can support a working model with as few as 5 examples, while more variable defect types benefit from more. The key difference from standard deep learning is that this range is orders of magnitude smaller than the 1,000+ images per class that conventional supervised training requires.

Is few-shot learning accurate enough for real production lines?

Yes, when paired with high-quality imaging and precise labeling. Few-shot learning models deployed in production environments at UnitX customer sites achieve accuracy levels suitable for critical defect detection — including near-zero false acceptance (FA) for structural defects. Performance is typically refined iteratively as real production data accumulates alongside the initial few-shot model. The system is not expected to be perfect on Day 1 with 5 images; it’s expected to be production-ready for the most critical defect classes, with improving precision over the first weeks of deployment.

Can few-shot learning handle multiple defect types simultaneously?

Yes. A CorteX model can be trained to recognize multiple defect classes — each with its own small set of annotated examples. The model learns discriminative features for each class simultaneously. The practical consideration is that each class should have at least 5 well-annotated, representative images. If certain rare defect classes have fewer than that, FleX-Gen can generate synthetic examples to bring each class up to the minimum threshold.

Explore CorteX’s small-sample AI training capabilities — or talk to UnitX experts to see how UnitX can inspect your specific part type.