The most common mistake manufacturers make when deploying AI visual inspection is treating each production line as an isolated project. They pilot on one line, prove ROI, and then discover that scaling to 10 or 20 lines requires re-engineering the system architecture from scratch: retraining models, re-integrating PLCs, and redeploying infrastructure that was never designed for factory-wide scale. Manufacturers can flexibly deploy and manage the lifecycle of ML models, scaling solutions across production lines and factories, but only if the architecture is designed for scale from the beginning.

The global machine vision market is projected to grow from $15.83 billion in 2025 to $23.63 billion by 2030, driven substantially by manufacturers scaling from single-line pilots to plant-wide and enterprise deployments. This guide provides the architectural blueprint, deployment sequence, and evaluation criteria for building an AI inspection system that scales from a single production line to a multi-product, multi-site operation without requiring a complete system rebuild at each expansion step.

Key Takeaways

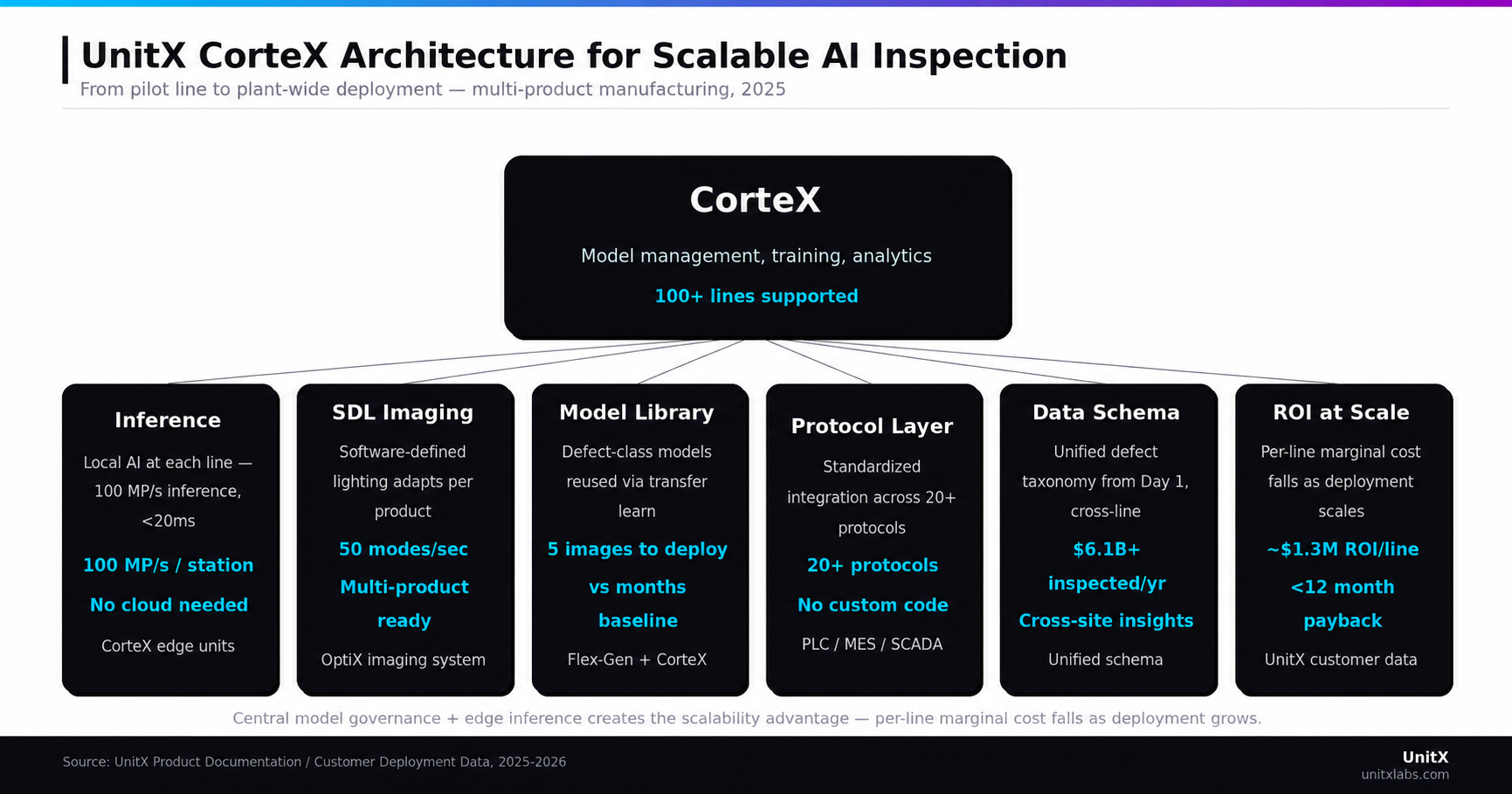

- Central-edge architecture is the foundation of scalability: centralized model management combined with edge inference enables “train once, deploy across all lines” without cloud latency penalties.

- Standardized hardware and protocol layers eliminate the re-integration cost that makes line-by-line scaling prohibitively expensive.

- Model reuse strategies: transfer learning, generated data, and cross-product model libraries, significantly reduce per-line AI development investment as scale increases.

- Data governance from Day 1 determines whether inspection data becomes a factory intelligence asset or a collection of isolated datasets that cannot be aggregated for process insight.

- Phased deployment: pilot, standardize, scale — is the only method that delivers predictable ROI at each stage while preserving the flexibility to adapt the architecture as production requirements evolve.

Why Single-Line AI Inspection Architectures Fail to Scale

The “One Model Per Product” Trap

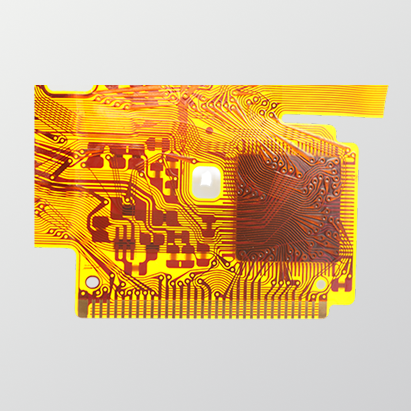

Handling multi-product inspection with a conventional approach required not just separate models, but also distinct annotation schemas and training pipelines for each product-class combination — compounding complexity with every new product added to the line. A manufacturer with 15 product families and 8 production lines faces a combinatorial explosion in model development and maintenance that consumes engineering resources faster than production scale grows.

The economic consequence is that inspection systems become a bottleneck to product diversification. When launching a new part variant requires 6–8 weeks of AI model development, quality engineering capacity limits how quickly the factory can respond to changes in customer demand. Traditional rule-based machine vision systems faced this problem acutely; AI systems can solve it — but only if they are designed to support cross-product model reuse from the architecture design phase.

Infrastructure Cost at Scale: What Changes Between Line 1 and Line 10

The costs of scaling AI inspection fall into three categories that differ significantly between Line 1 and Line 10. At Line 1, the dominant costs are model development (defect labeling and training), hardware procurement, and PLC integration. By Line 10, if the architecture is not designed for scale, model development costs multiply with each new line, integration costs repeat (because PLCs and MES interfaces vary by line), and system management overhead grows non-linearly as separate model versions diverge.

A properly designed central-edge architecture inverts this cost curve. UnitX’s central-edge architecture aggregates training and data management in a centralized system — enabling a “train once, deploy across all lines” approach, while supporting 20+ industrial protocols with no-code PLC integration. The per-line marginal cost of scaling drops significantly after the first standardized deployment because hardware configurations, model pipelines, and integration protocols are reused rather than rebuilt.

The Architecture Blueprint for Scalable Multi-Line AI Inspection

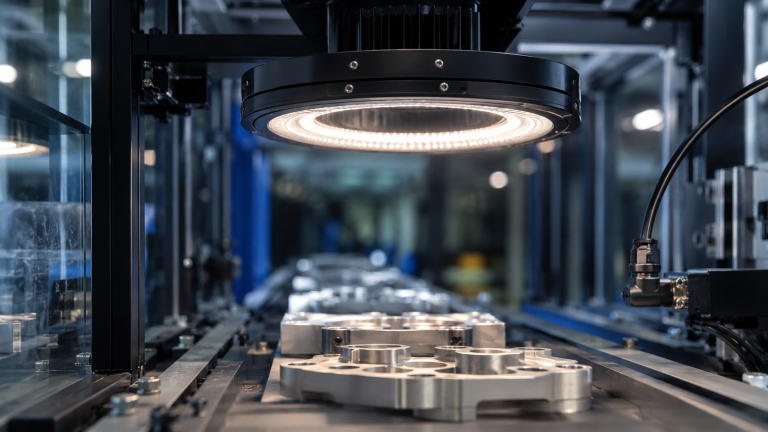

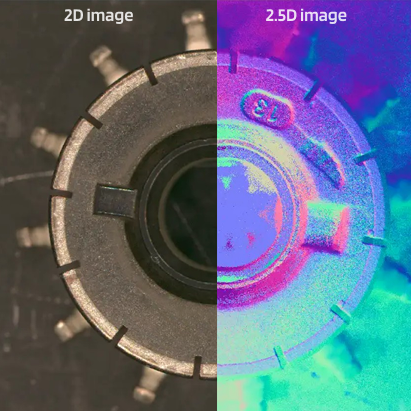

Layer 1 — Edge Inference: Line-Level AI at Production Speed

Edge inference is non-negotiable for multi-line AI inspection at production throughput. Cloud-dependent inference introduces network latency that breaks high-speed inspection timing requirements — at 100+ MP/s throughput, a 200 ms cloud round-trip creates a reject decision delay that forces either line slowdowns or buffer staging, both of which destroy the throughput case for AI inspection. Intel’s deployed edge AI inspection solution analyze machine vision images at the edge and can trigger alarms or stop the production tools in real time — a latency requirement that only local edge inference can satisfy.

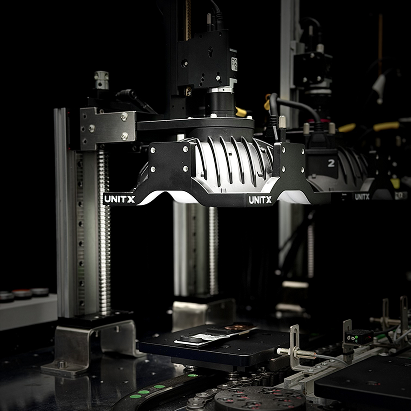

The edge compute architecture for scalable deployment requires standardization: identical hardware specifications across lines allow model deployment without revalidation for hardware compatibility differences. Each CorteX edge unit in a UnitX installation runs local inference at 100 MP/s throughput independently of network connectivity, ensuring that a network interruption at the central management layer does not degrade production-line inspection performance.

The CorteX AI visual inspection system is designed for this edge-first deployment model — processing inference locally at each station while synchronizing model updates, defect data, and performance telemetry to the central management layer on a configurable schedule.

Layer 2 — Central Management: Model Governance Across Lines

The central management layer is where scalability is either built or broken. A system that manages models as isolated, line-specific artifacts creates a maintenance burden that grows linearly with line count. A system designed for centralized model governance enables model updates to propagate across all relevant lines simultaneously, allows defect data from all lines to feed a shared model improvement pipeline, and supports cross-line performance comparisons that identify degraded detection accuracy before defect escapes increase.

Central model management requires a data schema that is consistent across lines and products: a design decision that must be made before the first line is deployed, not retrofitted after multiple lines are running with incompatible data formats. This includes standardized defect taxonomy, consistent labeling conventions, and metadata capture that enables cross-line analysis.

Layer 3 — Integration Protocol Standardization: PLCs, MES, and SCADA

Machine vision and PLC integration represents a replicable framework that supports intelligent manufacturing, but only when the protocol stack is standardized across lines. In practice, manufacturing facilities operate PLCs and MES systems from different providers at different protocol versions. A scalable AI inspection architecture must support a protocol abstraction layer that translates between AI inference outputs and whichever PLC/MES combination used on each line.

The integration standardization decision has a direct cost impact: facilities that standardize hardware and protocol stacks curing the pilot phase can deploy subsequent lines in days rather than weeks. Facilities that treat each line as a custom integration project incur full integration engineering costs with every new deployment.

Layer 4 — Model Reuse: Cross-Product Transfer Learning

The most powerful lever for reducing per-line AI development cost in multi-product facilities is model reuse through transfer learning. When an AI model trained on Product A has learned general surface defect features — such as scratches, contamination, and coating anomalies — that knowledge can transfer to Product B with relatively little incremental training, even when the parts differ substantially.

Practical model reuse requires managing a model library organized by defect class rather than by product. For example, a “surface scratch” model library captures scratch detection generalizations across metal, plastic, and ceramic parts, and can be fine-tuned to a new part with a fraction of the training data required to build a model from scratch. FleX-Gen’s generated data generation amplifies this further — when a new product has only 3 real defect samples, FleX-Gen can generate the training volume needed to fine-tune a transfer-learned model to production accuracy, compressing new product launch timelines from months to days.

Model governance combined with edge inference creates the decisive scalability advantage — marginal per-line costs decrease as the deployment expands.

Phased Deployment: From Pilot Line to Plant-Wide Scale

Phase 1 — Pilot Line: Prove ROI and Establish Standards (Weeks 1–7)

The pilot deployment has two purposes: proving the ROI case for the plant’s specific production conditions and establishing the architectural standards that all subsequent lines will follow. The ROI proof is straightforward — document the baseline defect escape rate and false rejection rate, deploy the AI system, and measure performance against the baseline after stabilization.

The architectural standards work is less visible but more important: every protocol, hardware configuration, data schema, and model management decision made during the pilot becomes the template for all subsequent lines.

UnitX’s deployment methodology achieves Site Acceptance Testing (SAT) in as few as 5 days and full production deployment within 7 days for single-line installations, with model training completing in approximately 30 minutes from labeled data (UnitX customer data, 2025). These timelines are achievable because the system architecture is designed for rapid deployment — not because corners are cut on validation.

Phase 2 — Standardization: Documenting the Repeatable Blueprint (Weeks 7–10)

Before scaling, document every architectural decision from the pilot: hardware configuration, lighting setup parameters, software version, PLC integration protocol, MES data schema, model structure, and performance baseline. This documentation becomes the deployment runbook for all subsequent lines — the asset that allows the 10th line to deploy in days rather than the weeks the first line required.

UnitX’s AI visual inspection system includes deployment documentation and configuration management tools that support this standardization objective.

Phase 3 — Scaled Rollout: Leveraging the Repeatable Architecture (Ongoing)

With a validated pilot and a documented deployment blueprint, scaled rollout follows a predictable pattern: replicate hardware configuration, push the model from central management to the new edge unit, configure PLC integration using the standardized protocol layer, and validate performance against the pilot baseline specification.

Each new line benefits from models pre-trained on the growing cross-product defect library, progressively reducing model development time as the library expands.

Multi-site deployments follow the same pattern, extended to a regional or global level — central model management serves multiple plant-level installations, each running edge inference independently while contributing defect data back to the central improvement pipeline. This architecture enables a manufacturer’s best quality engineering insights to propagate across all sites simultaneously through model updates, rather than remaining siloed in the specific plant where it was learned.

Six Architectural Decisions That Determine Multi-Line Scalability

The following decisions have an outsized impact on how efficiently AI inspection scales beyond the first production line.

| Decision | Scalable Choice | Anti-Pattern |

| Inference location | Edge-first — inference at each line, model management central | Cloud-only inference (latency, dependency) |

| Hardware standardization | Identical specs across all lines — same cameras, compute, lighting | Custom hardware per line (revalidation cost) |

| Model organization | Defect-class library — reuse across products via transfer learning | One model per product-line combination |

| Data schema | Unified taxonomy across all lines from Day 1 | Line-specific data formats (no cross-line analysis) |

| PLC integration | Protocol abstraction layer supporting 20+ vendor protocols | Custom integration per PLC vendor (re-engineering) |

| Model update mechanism | Central push to all edge units — validated before deploy | Manual update per line (drift between versions) |

Frequently Asked Questions

How do you train AI inspection models when the same production line runs multiple product types?

The most effective approach is to maintain a defect-class model library organized by defect types rather than by products. Surface scratch detection, for example, shares learned features across metal, plastic, and ceramic parts. When a new product is introduced on the line, transfer learning from the existing scratch detection model requires far fewer labeled images than building a new model from scratch.

FleX-Gen’s synthetic data generation further reduces the labeled image requirement to as few as 3 real defect samples per defect class, enabling new product qualification in days rather than weeks even when the new part includes rare defect types.

What is the right balance between edge inference and cloud model management for a 20-line facility?

For production-line inference decisions: pass/fail, reject triggering — edge inference must be the architecture because cloud latency is incompatible with high-speed line timing. Cloud or centralized infrastructure is appropriate for model training, version management, cross-line performance analytics, and model update distribution.

The practical architecture is: edge compute at each line for inference, and a central server (on-premises or private cloud) for model management and analytics, with a secure update mechanism that pushes validated model versions to edge units during scheduled maintenance windows. For a 20-line facility, the central server should be sized to aggregate telemetry from all lines and manage the model lifecycle across the full deployment, not for real-time inference support.

How do you maintain model performance consistency across lines as production conditions change?

Performance consistency requires three mechanisms: (1) standardized illumination to eliminate lighting drift, which is the most common cause of model performance degradation over time, (2) continuous performance monitoring to alert quality engineers when a line’s detection rate or false rejection rate deviates from its validated baseline, and (3) a retraining pipeline that incorporates new production defect samples and pushes updated models to affected lines within hours rather than weeks. The UnitX resources center includes deployment guidance on performance monitoring protocols for multi-line installations.

What MES integration does a scalable multi-line AI inspection system require?

Minimum MES integration for a scalable system includes: real-time reject signaling to the production control system (PLC-level), part traceability linkage (defect record associated with a unique part identifier), defect trend data export to MES for quality reporting, and a bidirectional interface that allows MES process parameter data to inform inspection sensitivity adjustments.

The key scalability requirement is that this integration uses a standard protocol (OPC-UA, Modbus, MQTT, or a supported industrial protocol), rather than a proprietary interface that must be rebuilt for each MES or PLC upgrade. The strategic shift toward predictive quality assurance requires MES integration that feeds inspection data back into process control — not just a reporting output.