The AI visual inspection market in 2026 is no longer a niche — it is a competitive landscape with clear performance tiers. The challenge for quality engineers and procurement teams is separating systems built on genuine deep learning capabilities from those that simply rebrand legacy rule-based machine vision with an “AI” label. This guide evaluates leading systems based on the criteria that determine real production outcomes: inspection accuracy, data efficiency, cycle time, MES integration, and deployment speed. Understanding these distinctions before issuing an RFQ can save three to six months of evaluation time.

Key Takeaways

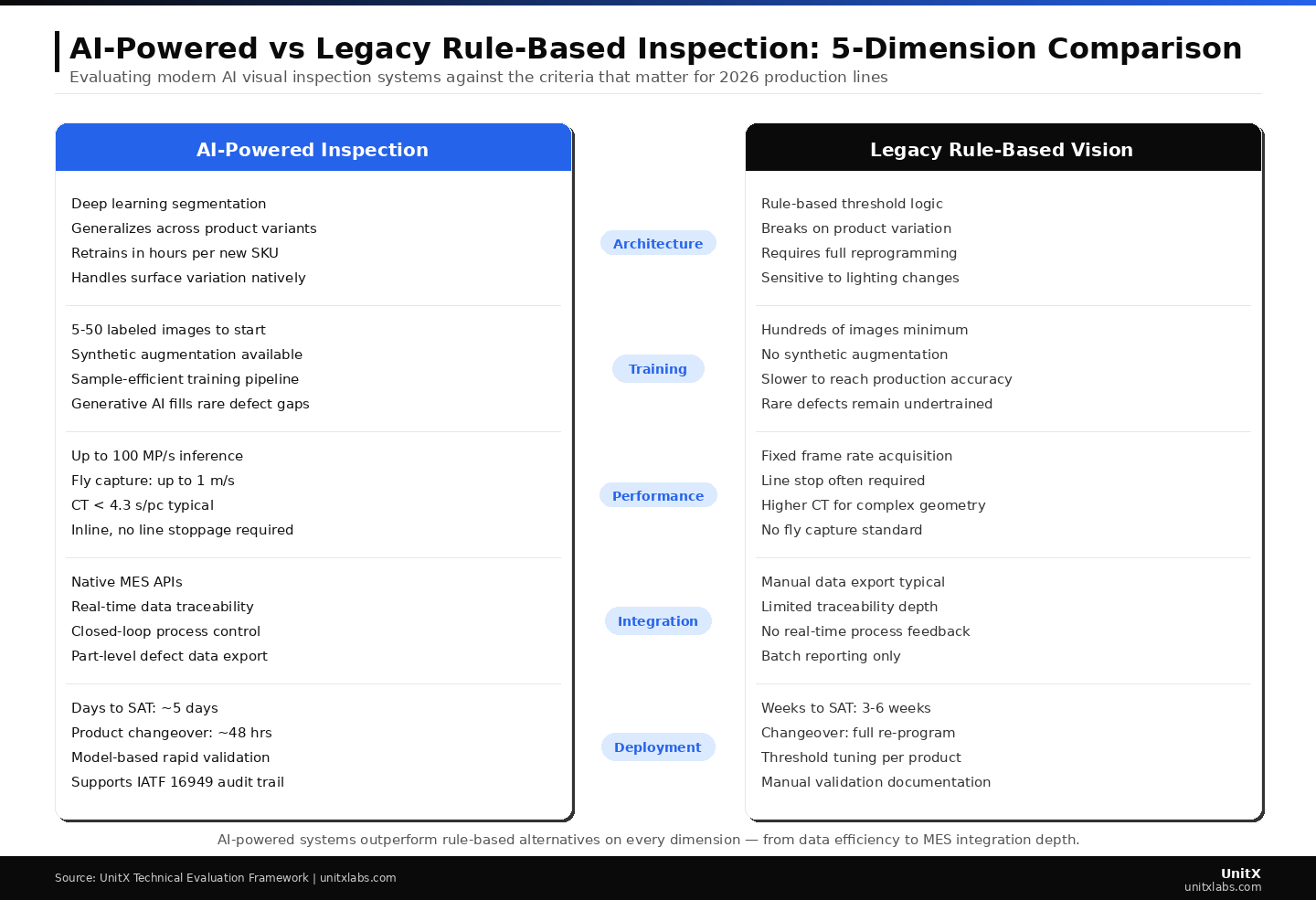

- The most important distinction in 2026 is architecture: genuine deep learning segmentation vs. rule-based threshold logic marketed as “AI-powered.”

- Sample efficiency, the minimum number of images required to begin training, is the most practical early differentiator for teams with limited defect image libraries.

- MES integration depth separates systems that close the quality loop from those that function as isolated pass/fail gates.

- Days-to-SAT (the time needed to pass site acceptance testing) is a reliable indicator of a system’s true generalization capability; rule-based systems require weeks of threshold tuning per product.

- Total cost of ownership (TCO) over three years almost always favors AI-first systems, even when the initial acquisition cost is higher, because product changeover costs are dramatically lower.

How to Evaluate AI Visual Inspection Systems: The Five Criteria That Matter

Criterion 1 — Model Architecture: Deep Learning Segmentation vs. Rule-Based Logic

The most consequential question in any evaluation is whether the system uses genuine deep learning — specifically, deep learning segmentation — or rule-based threshold logic. The distinction is not semantic. Rule-based systems check whether image regions exceed defined threshold values. They are fast to configure for a stable product but require complete reconfiguration for every product variant. Deep learning segmentation models learn the statistical distribution of defect appearance from labeled examples and generalize to unseen variants. They handle surface finish variation, orientation changes, and minor lighting shifts without reconfiguration.
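
To make the rule-based side of this distinction concrete, here is a minimal sketch of threshold logic of the kind described above. The region names, gray-level limits, and function name are hypothetical illustrations, not any vendor's implementation. It shows why limits tuned for one product variant tend to reject good parts of another.

```python
from typing import Dict, Tuple

# Hypothetical per-region limits on mean gray level (0-255),
# hand-tuned for ONE product variant.
THRESHOLDS_VARIANT_A: Dict[str, Tuple[float, float]] = {
    "weld_seam": (90.0, 140.0),
    "housing_face": (160.0, 210.0),
}


def rule_based_check(region_means: Dict[str, float],
                     thresholds: Dict[str, Tuple[float, float]]) -> bool:
    """Return True (pass) only if every region's mean intensity is within its limits."""
    for region, (low, high) in thresholds.items():
        value = region_means[region]
        if not (low <= value <= high):
            return False
    return True


# A good part of variant A sits inside the tuned limits.
good_a = {"weld_seam": 120.0, "housing_face": 185.0}
# A good part of a darker-finish variant B has no defect,
# but its housing face falls below the limit tuned for variant A.
good_b = {"weld_seam": 118.0, "housing_face": 150.0}

print(rule_based_check(good_a, THRESHOLDS_VARIANT_A))
print(rule_based_check(good_b, THRESHOLDS_VARIANT_A))
```

Under these assumed values, the variant B part is rejected purely because of its surface finish, which is the reconfiguration burden that a learned segmentation model is meant to avoid.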

According to McKinsey’s manufacturing AI research, deep learning inspection systems reduce product changeover costs by 60–80% compared to rule-based systems over a three-year horizon, driven primarily by eliminating threshold re-engineering for new SKUs. Systems like CorteX deliver deep learning segmentation with pixel-level defect boundary measurement — a capability that rule-based systems cannot replicate.

Criterion 2 — Data Efficiency: Sample-Efficient Training

The practical ability to train a reliable model from a small number of labeled images determines how quickly a system reaches production readiness and how expensive product expansion becomes. Systems that require hundreds of images per defect class create a bottleneck: you either wait months to collect rare defects, or you go live undertrained and accept an elevated false acceptance (FA) rate. Systems that can train production-ready models from as few as five labeled images per defect class, using sample-efficient deep learning architectures, fundamentally change the deployment economics.

Generative AI augmentation further reduces data requirements by generating statistically valid synthetic defect images from limited real examples. This capability is not universal across vendors and should be explicitly tested during evaluation. Published research on synthetic data augmentation shows that well-validated synthetic data can achieve accuracy equivalent to training on datasets 3–5× larger.

Criterion 3 — Inspection Speed: CT and Fly-Capture Capability

Cycle time (CT) compliance is a binary requirement: the inspection system either fits within the production line’s available CT budget, or it creates a bottleneck. Most production applications require CT below 5 seconds per part; high-throughput lines require sub-second CTs. Fly-capture capability — the ability to inspect parts moving at line speed without stopping — is essential for many continuous-flow applications. OptiX’s fly capture supports inspection at up to 1 m/s conveyor speed, eliminating the need for stop-and-inspect stations that disrupt flow.

Maximum throughput varies across systems. The UnitX platform supports up to 1,200 parts per minute at full throughput with multiple inspection stations. Verify CT specifications under your exact lighting and part geometry conditions during POC — not just from datasheet claims.
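
The CT budget behind these figures is simple arithmetic, sketched below. The function names are hypothetical, and the code assumes that parallel stations split the part flow evenly, which your line layout may not guarantee. The station count in the example is also an assumption, since the number behind the 1,200 parts-per-minute figure is not stated here.

```python
def ct_budget_seconds(parts_per_minute: float, parallel_stations: int = 1) -> float:
    """Time available per part at each station, in seconds.

    Assumes parts are distributed evenly across parallel stations.
    """
    if parts_per_minute <= 0:
        raise ValueError("parts_per_minute must be positive")
    if parallel_stations < 1:
        raise ValueError("parallel_stations must be at least 1")
    return 60.0 * parallel_stations / parts_per_minute


def fits_budget(measured_ct_s: float, parts_per_minute: float,
                parallel_stations: int = 1) -> bool:
    """True if the CT measured during POC fits the line's per-station budget."""
    return measured_ct_s <= ct_budget_seconds(parts_per_minute, parallel_stations)


# 1,200 parts per minute through a single station leaves 60 / 1200 s per part.
print(ct_budget_seconds(1200))
# Four parallel stations (an assumed count) relax the per-station budget fourfold.
print(ct_budget_seconds(1200, parallel_stations=4))
# A hypothetical CT of 0.15 s measured during POC fits only the four-station layout.
print(fits_budget(0.15, 1200), fits_budget(0.15, 1200, parallel_stations=4))
```

At 1,200 parts per minute, a single station has 50 ms per part. Feed the function the CT you measure during POC, not the datasheet value.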

Criterion 4 — MES Integration: Real-Time Data Traceability

An inspection system that operates as an isolated pass/fail gate generates scrap data. An inspection system with native MES integration generates actionable process intelligence. Over a three-year deployment, this difference determines whether you simply maintain quality or continuously improve it. Evaluate MES integration on three dimensions: protocol support (e.g. OPC-UA, MQTT, REST, or proprietary); latency (real-time vs. batch export); and data depth (defect class, confidence, image, and upstream process linkage vs. simple pass/fail output).
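
As an illustration of the "data depth" dimension, the sketch below shows what a part-level record might carry beyond pass/fail. Every field name and example value is a hypothetical assumption, not a vendor or MES schema. The transport layer (OPC-UA, MQTT, or REST) is deliberately left out.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Optional


@dataclass
class Defect:
    defect_class: str   # e.g. "scratch"
    confidence: float   # model confidence, 0.0 to 1.0
    area_px: int        # segmented defect area in pixels


@dataclass
class InspectionRecord:
    part_id: str
    station_id: str
    timestamp_utc: str
    passed: bool
    defects: List[Defect] = field(default_factory=list)
    image_uri: Optional[str] = None
    # Links to upstream process steps, so a defect can be traced to its cause.
    upstream_process: Dict[str, str] = field(default_factory=dict)


def to_mes_payload(record: InspectionRecord) -> str:
    """Serialize a record to JSON; asdict() also converts the nested Defect entries."""
    return json.dumps(asdict(record), sort_keys=True)


record = InspectionRecord(
    part_id="SN-000123",
    station_id="inspect-03",
    timestamp_utc="2026-01-15T08:30:00Z",
    passed=False,
    defects=[Defect(defect_class="scratch", confidence=0.97, area_px=412)],
    image_uri="images/SN-000123.png",
    upstream_process={"press_id": "P-07", "lot": "L-4521"},
)
print(to_mes_payload(record))
```

A system that can only emit the `passed` field is the isolated pass/fail gate described above. The defect class, confidence, image, and upstream links are what make closed-loop analysis possible.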

According to Gartner’s analysis of AI in manufacturing, closed-loop quality control — where inspection results feed directly into process adjustment — is the highest-ROI application of AI in manufacturing, delivering a 20–35% reduction in defect rates within 12 months.

Criterion 5 — Deployment Speed: Days-to-SAT as a Capability Proxy

Site Acceptance Testing (SAT) duration is an underused evaluation metric that reveals more about a system’s real capability than any benchmark. A system that completes SAT in five days, with a verified false acceptance (FA) rate of 0% and a false rejection (FR) rate of at most 1% on live parts, demonstrates true generalization. A system that requires three to six weeks of threshold tuning to reach the same specification is, in practice, a rule-based system, regardless of how it is marketed. Request documented SAT completion records from reference customers during your evaluation, not just vendor-supplied benchmarks.
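
The SAT acceptance check can be sketched as follows. This assumes FA means false acceptance (defective parts the system passed) and FR means false rejection (good parts the system rejected). It also assumes each rate is computed over the defective and good populations respectively. Vendors may define the denominators differently, so confirm the definitions in your SAT protocol. The function names and sample counts are hypothetical.

```python
from typing import Iterable, Tuple


def fa_fr_rates(results: Iterable[Tuple[bool, bool]]) -> Tuple[float, float]:
    """Compute (FA rate, FR rate) from per-part (is_defective, was_rejected) pairs.

    is_defective is the ground truth; was_rejected is the system's decision.
    """
    defective = good = false_accepts = false_rejects = 0
    for is_defective, was_rejected in results:
        if is_defective:
            defective += 1
            if not was_rejected:
                false_accepts += 1
        else:
            good += 1
            if was_rejected:
                false_rejects += 1
    fa = false_accepts / defective if defective else 0.0
    fr = false_rejects / good if good else 0.0
    return fa, fr


def meets_sat(results: Iterable[Tuple[bool, bool]],
              max_fa: float = 0.0, max_fr: float = 0.01) -> bool:
    """Check results against the FA = 0% and FR <= 1% specification."""
    fa, fr = fa_fr_rates(results)
    return fa <= max_fa and fr <= max_fr


# Hypothetical run of 1,000 parts: 950 good (8 wrongly rejected), 50 defective (all caught).
sample = ([(False, False)] * 942 + [(False, True)] * 8 + [(True, True)] * 50)
print(fa_fr_rates(sample))
print(meets_sat(sample))
```

A single missed defect in the same run would push FA above zero and fail the check, which is why the SAT sample needs to include enough real defective parts to be meaningful.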

AI-powered systems outperform rule-based alternatives across every dimension critical to 2026 production environments. The performance gap is widest in deployment speed and product changeover cost — the two factors that ultimately determine total cost of ownership over a three-year production horizon.

The 2026 AI Visual Inspection System Landscape: Architecture Tiers

The market in 2026 has consolidated into three recognizable tiers based on the sophistication of the underlying technology.

Tier 1 — Full-stack AI inspection platforms combine purpose-built, software-defined imaging hardware, sample-efficient deep learning segmentation, generative AI augmentation, and native MES integration into a single validated system. These platforms are designed for inline production at scale and support product changeovers without re-engineering. UnitX’s FleX platform represents this tier — purpose-built for 100% inline inspection on high-mix manufacturing lines. Tier 1 systems typically achieve SAT in five days, support fly capture, and include real-time data traceability from day one.

Tier 2 — AI-enhanced traditional machine vision systems integrate deep learning modules on top of existing rule-based inspection platforms. These systems offer improved accuracy over purely rule-based systems but retain architectural constraints: separate illumination controllers, limited generalization, and slower product changeovers compared to Tier 1 systems. They represent a reasonable upgrade path for facilities with existing machine vision infrastructure, but do not deliver Tier 1 performance benchmarks.

Tier 3 — Rule-based systems with AI labeling apply neural network terminology to fundamentally threshold-based inspection logic. These systems struggle with product variation, require extensive re-engineering for new parts, and cannot support fly capture at competitive CT. Identifying Tier 3 systems requires asking directly: “Can your system train on fewer than 50 images per defect class without manual threshold calibration?” A “no” or hedged answer indicates a Tier 3 architecture.

Evaluation Scorecard: How to Score Systems Objectively

| Criterion | Weight | What to Measure | Minimum Threshold |
| --- | --- | --- | --- |
| Model architecture | 30% | Deep learning segmentation vs. rule-based | Must be DL segmentation |
| Data efficiency | 20% | Images required to start training per class | <50 images per class |
| Inspection CT | 20% | CT at production throughput rate | <4.3 s/pc standard; fly capture if required |
| MES integration | 15% | Real-time protocol, data depth | Real-time traceability, part-level data |
| Days to SAT | 10% | Verified SAT duration on customer reference | <10 days |
| Changeover time | 5% | Hours to switch inspection model per new part | <48 hours without re-engineering |
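
One way to turn the scorecard into a single comparable number is a weighted sum, sketched below. The weights come from the table. The 0 to 5 rating scale, the dictionary keys, and the example vendor ratings are assumptions for illustration. The minimum thresholds are best applied as pass/fail gates before any scoring, which this sketch does not model.

```python
from typing import Dict

# Weights taken from the scorecard table; they sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "model_architecture": 0.30,
    "data_efficiency": 0.20,
    "inspection_ct": 0.20,
    "mes_integration": 0.15,
    "days_to_sat": 0.10,
    "changeover_time": 0.05,
}


def weighted_score(ratings: Dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-5 scale) into one weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings from one POC.
vendor_a = {
    "model_architecture": 5,
    "data_efficiency": 4,
    "inspection_ct": 3,
    "mes_integration": 4,
    "days_to_sat": 5,
    "changeover_time": 2,
}
print(round(weighted_score(vendor_a), 2))
```

Fixing the weights and rating scale before the first vendor demo keeps the comparison from drifting toward whichever system presented best.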

Key Vendor Segments: What to Know Before Your RFQ

Full-Stack AI Inspection Specialists

Vendors that have built their entire stack — imaging hardware, AI training pipeline, inference engine, and MES connectors — around a unified architecture for inline industrial inspection typically offer the strongest performance and lowest total cost of ownership (TCO) for high-mix, high-volume manufacturing. Their trade-off is reduced flexibility for highly customized applications requiring non-standard imaging modalities. UnitX’s AI-powered inspection solutions are deployed by Top 5 Tier 1 automotive suppliers and Top 2 EV battery manufacturers — a reference base that confirms production-validated performance at scale.

General-Purpose Machine Vision with AI Modules

Large machine vision companies that have added deep learning modules to established rule-based platforms offer broad product portfolios and strong global service networks. Their AI capabilities vary; some have invested heavily in true deep learning R&D, while others have added neural network classifiers onto rule-based cores. Evaluating them requires the same architecture questions as any other vendor — the brand name alone does not guarantee the technology tier.

Startup and Pure-Software AI Vendors

Software-only AI inspection vendors provide model training and inference tools that run on customer-supplied cameras and computers. Their strength is flexibility and often lower software acquisition cost. Their limitation is that, without hardware integration, image quality control becomes the customer’s responsibility, and lighting variance is a primary driver of elevated false rejection (FR). Evaluate software-only vendors with a realistic assessment of your in-house imaging hardware capabilities. UnitX’s technical blog covers the hardware-software integration requirements in detail for teams considering hybrid approaches.

Industry-Specific Considerations for 2026

Automotive and Tier 1 Suppliers

IATF 16949 compliance is a non-negotiable requirement for automotive quality systems. Any AI visual inspection system must be capable of generating the traceability records, measurement system analysis (MSA) documentation, and statistical process control (SPC) outputs required for audits. Verify compliance documentation before POC, not after. UnitX’s automotive AI inspection solutions are validated against IATF 16949 requirements and deployed across powertrain, chassis, and body components. The IATF 16949 standard itself is the definitive reference for audit documentation requirements.

Battery Manufacturing and the EV Supply Chain

Battery cell inspection presents unique challenges: electrode surfaces require 2.5D imaging for tab geometry validation, and defect tolerances are measured in microns rather than millimeters. Fly capture at line speed is typically required. Systems that meet automotive surface inspection specifications may not meet battery electrode inspection requirements, so validation through POC on actual cell formats is essential. UnitX’s battery inspection solutions achieve 3 μm z-axis repeatability on electrode surfaces, validated on EV battery production lines for Top 2 EV manufacturers.

Semiconductor and Advanced Packaging

Semiconductor inspection combines the highest resolution requirements (up to 50 MP per image) with the most demanding CT constraints in manufacturing. Deep learning segmentation on high-resolution images requires inference hardware capable of 100 MP/s throughput to stay within production CT budgets. UnitX’s semiconductor inspection platform is designed for this intersection of resolution and speed.
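
The relationship between image resolution, CT budget, and inference throughput is straightforward to check, as in the sketch below. The function name, the 0.5-second budget, and the images-per-part counts are assumptions chosen to show how a 50 MP image leads to a 100 MP/s requirement. They are not published specifications.

```python
def required_throughput_mp_per_s(image_megapixels: float,
                                 images_per_part: int,
                                 ct_budget_s: float) -> float:
    """Inference throughput (megapixels per second) needed to stay within the CT budget."""
    if ct_budget_s <= 0:
        raise ValueError("ct_budget_s must be positive")
    if images_per_part < 1:
        raise ValueError("images_per_part must be at least 1")
    return image_megapixels * images_per_part / ct_budget_s


# One 50 MP image per part with an assumed 0.5 s budget: 50 / 0.5 MP/s.
print(required_throughput_mp_per_s(50, 1, 0.5))
# Four views per part (assumed) under the same budget need four times the throughput.
print(required_throughput_mp_per_s(50, 4, 0.5))
```

Multi-view inspection multiplies the requirement, so count every image the recipe captures per part when sizing inference hardware.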

Frequently Asked Questions

How do I distinguish genuine AI inspection from rule-based systems marketed as AI?

Ask the vendor to demonstrate training a new defect class from scratch during your evaluation, starting with fewer than 20 labeled images and without any manual threshold calibration. A genuine deep learning system can do this; a rule-based system cannot, regardless of the marketing language. Also request documented SAT completion records showing days-to-SAT on a reference customer with a comparable product mix. Reviewing independent case studies with verified customer contacts is the most reliable way to differentiate.

What is the realistic ROI timeline for AI visual inspection in 2026?

For high-mix production lines (10+ part numbers), savings from reduced product changeover costs and lower scrap typically deliver payback in 12–18 months. For low-mix, high-volume lines with stable products, payback is often 18–24 months, with the primary driver being the elimination of quality escapes (FA = 0%). According to McKinsey’s operations research, AI quality control systems achieve an average 18-month payback across a broad manufacturing sample.

Should we evaluate AI visual inspection systems on our own line or in a vendor lab?

Always evaluate on your own line, using your own parts, under your actual production lighting and throughput conditions. Lab evaluations with benchmark parts systematically overstate performance because they use optimal surface conditions, controlled lighting, and a limited defect taxonomy. Request a Proof of Concept (POC) in your production environment with clearly defined success criteria, such as documented FA and FR results on a minimum of 1,000 production parts.

How many systems should we evaluate simultaneously?

Two to three simultaneous POCs is the practical maximum for most quality engineering teams. Fewer than two limits your ability to compare; more than three creates an evaluation burden that can compromise the quality of each assessment. Structure each POC with identical evaluation criteria and a common scoring rubric before engaging vendors, so comparisons remain objective rather than influenced by presentation quality.