Image transformation in a machine vision system is the process of changing an image's geometry or appearance to make it suitable for analysis by computers. In computer vision, these changes include shifting, rotating, or scaling images using methods such as affine transformations and homographies. Applying these transformations well increases the accuracy and reliability of computer vision models, allowing them to detect and recognize objects even when their position, orientation, or size changes. Used properly, these techniques lead to faster and more precise results in computer vision tasks; vendors of modern AI-driven inspection systems often report error rates below 1%.

Key Takeaways

- Image transformation changes images to help computers analyze them better by adjusting position, size, orientation, brightness, and contrast.

- Combining geometric and photometric transformations improves defect detection and object recognition in manufacturing and automation.

- Modern CMOS sensors capture clear images at high frame rates, enabling faster and more accurate machine vision results.

- Common techniques include filtering, morphological operations, and feature extraction to reduce noise and highlight important details.

- Beginners should start with simple projects using tools like OpenCV, practice basic concepts, and gradually explore advanced methods.

Image Transformation in Machine Vision Systems

What Is Image Transformation?

Image transformation in a machine vision system means changing an image so that a computer can analyze it more effectively. These changes fall into two main types: geometric and photometric. Geometric transformations adjust the position, size, or orientation of objects in an image. For example, a system might rotate, flip, or scale an image to help a computer vision model recognize an object from different angles. Photometric adjustments change the brightness, contrast, or color of an image. These changes help the system cope with different lighting conditions and make important features clearer.

Sensors play a key role in this process. Most modern machine vision systems use CMOS sensors rather than the older CCD sensors. Current CMOS sensors offer high frame rates, wide dynamic range, and low noise. As a result, the system can capture clear, detailed images even when objects move quickly or lighting changes, and it can deliver accurate results in real time.

Note: Both geometric and photometric transformations are essential for preparing images in automated environments. Geometric transformations align images, and photometric adjustments make their appearance consistent. Together they make it easier for computer vision and machine vision systems to extract useful data.

How It Works

A machine vision system follows a series of steps to prepare images for analysis. First, it captures an image with a sensor, usually a CMOS sensor. It then applies geometric transformations, such as translation, rotation, and scaling. These steps change the location and orientation of pixels and bring objects into a consistent position, so the system can recognize them from different viewpoints.

Next, the system applies photometric adjustments to modify the image's brightness, contrast, and color. This step helps the computer vision model handle images taken under different lighting conditions. The system may also use filtering techniques, such as Gaussian filters for noise reduction or Sobel filters for edge detection. Morphological operations, such as erosion and dilation, clean up the image by removing small details or filling gaps.

Here are some common types of image transformation used in machine vision systems:

- Filtering Techniques
  - Gaussian Filter: Smooths the image and reduces noise.
  - Sobel Filter: Detects edges.
  - Histogram Equalization: Improves contrast.

- Morphological Operations
  - Erosion: Shrinks object boundaries.
  - Dilation: Expands object boundaries.
  - Opening: Removes small objects.
  - Closing: Fills small holes.

- Feature Extraction Techniques
  - Shape-Based Features: Measures area and perimeter.
  - Texture Analysis: Examines pixel patterns.
  - Color-Based Features: Looks at color information.
  - Histogram-Based Features: Studies pixel intensity.

The system uses these steps to align images and prepare them for further analysis. This improves the accuracy of computer vision models and helps machine vision systems make better decisions. Some hybrid approaches have been reported to improve image alignment accuracy significantly, which in turn gives higher-quality inputs and better results in automated environments.

Machine vision systems rely on these transformations to make images ready for tasks like inspection, sorting, and quality control. When geometric and photometric processing is combined with modern sensors, these systems can handle complex tasks reliably and efficiently in manufacturing and other industries.

Why Image Transformation Matters

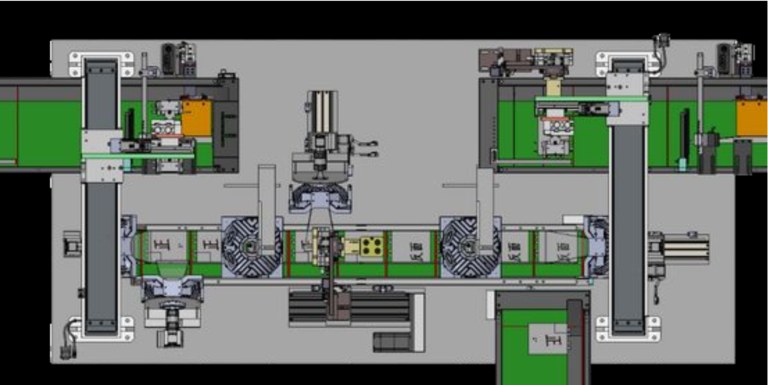

Role in Manufacturing

Image transformation plays a critical role in manufacturing. Machine vision systems use these techniques to improve inspection accuracy and efficiency. When factories produce thousands of parts each day, even small defects can cause big problems. Machine vision helps detect defects early, reducing waste and saving money. Automated visual inspection systems use image transformation to spot tiny cracks, scratches, or color changes that human eyes might miss.

Manufacturers also use image processing to create diverse, realistic images for training. Synthetic data augmentation increases the variety of training images, for example by changing color, brightness, or orientation. This variety helps machine vision systems recognize defects under different lighting and in different positions. Proper annotation and feedback loops further improve defect detection rates. Generative models such as GANs can create realistic defect images from defect-free samples. This addresses a common problem in manufacturing: there is often little real defect data to train on.
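Synthetic augmentation of this kind can be sketched with plain NumPy. The hypothetical `augment` function below applies three random changes: a horizontal mirror, a rotation by a multiple of 90 degrees, and a brightness/contrast change. The gain and offset ranges are illustrative assumptions. GAN-based defect synthesis is outside the scope of a short example:

```python
import numpy as np


def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return one randomly varied copy of an 8-bit image (H x W or H x W x C)."""
    out = image
    # Orientation: optional horizontal mirror, then rotate by 0, 90, 180 or 270 degrees
    if rng.random() < 0.5:
        out = np.fliplr(out)
    out = np.rot90(out, k=int(rng.integers(0, 4)))
    # Photometric: random gain (contrast) and offset (brightness), clipped to 8-bit range
    gain = rng.uniform(0.8, 1.2)
    offset = rng.uniform(-20.0, 20.0)
    out = np.clip(out.astype(np.float32) * gain + offset, 0, 255)
    return out.astype(np.uint8)


rng = np.random.default_rng(seed=0)
sample = np.full((64, 64), 128, dtype=np.uint8)  # stand-in for a real part image
variants = [augment(sample, rng) for _ in range(8)]
```

Rotating a non-square image by 90 or 270 degrees swaps its height and width. A pipeline that needs a fixed input size would resize afterwards.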

Tip: Standardizing imaging conditions while keeping enough variation in datasets ensures consistent defect detection performance.

Industry reports indicate that AI-driven visual inspection systems can outperform human inspectors. These systems detect defects in real time, which speeds up inspection and keeps quality control consistent. Some companies report up to 99% defect detection accuracy and 25% faster part inspection. Automated visual inspection also reduces human error, lowers labor costs, and helps maintain high product standards.

Benefits for Automation

Machine vision systems transform manufacturing by enabling real-time processing and decision-making. Automated systems use image transformation to extract features quickly and accurately, which allows immediate responses during inspection, sorting, and quality control. Industrial image processing highlights important areas, making defects easier to find and quality control more consistent.

Machine vision also improves safety and accuracy in automated environments. AI-powered systems learn from large datasets, which improves object recognition and anomaly detection and reduces both false positives and false negatives. Companies report measurable benefits, such as 30–50% less machine downtime, 10–30% higher throughput, and 15–30% better labor productivity.

Efficient data extraction is another key benefit. Image transformations like resizing, normalization, and noise reduction standardize raw data. This standardization improves model accuracy and reduces training time. Machine vision systems achieve high accuracy and scalability, making them essential for modern manufacturing. Industrial image processing ensures that inspection applications run smoothly, supporting a wide range of manufacturing needs.

Types of Image Processing Techniques

Modern machine vision relies on several image processing techniques to prepare images for analysis. These methods help computer vision systems recognize objects, detect defects, and perform accurate segmentation. Below are the main categories of image processing used in industrial and manufacturing environments.

Geometric Transformations

Geometric transformations change the position, size, or orientation of objects in an image. These techniques include affine transformations, which map straight lines to straight lines and keep parallel lines parallel. The most widely used geometric transformations in industrial image processing are:

- Rotation: Rotates images by specific angles to simulate different viewpoints.

- Flipping: Mirrors images horizontally or vertically to handle symmetry.

- Scaling: Resizes images to simulate variations in object size.

- Translation: Shifts images along axes to detect objects in different positions.

Affine transformations such as rotation and scaling play a key role in data augmentation. They help computer vision models handle real-world variations, improving accuracy and robustness. Advanced methods, such as the Radon and Fourier–Mellin transforms, convert rotation and scaling into translations and amplitude changes in a transformed domain, where they are easier to estimate. This approach avoids errors introduced by binarization and normalization, and it has been reported to give higher classification accuracy and better noise resistance.
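Fourier–Mellin registration builds on phase correlation. Once the Fourier magnitude is resampled to log-polar coordinates, rotation and scaling appear as shifts, and phase correlation can recover those shifts. The NumPy sketch below shows only that shift-recovery step, for a circular (wrap-around) translation. The log-polar stage and sub-pixel refinement are omitted. OpenCV also provides `cv2.phaseCorrelate` for the same purpose:

```python
import numpy as np


def phase_correlation_shift(reference: np.ndarray, moved: np.ndarray) -> tuple:
    """Estimate the integer (dy, dx) circular shift that maps reference onto moved."""
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moved)
    # Normalized cross-power spectrum: keep only the phase difference
    cross = np.conj(f_ref) * f_mov
    cross /= np.abs(cross) + 1e-12
    # Its inverse FFT is (ideally) a single peak located at the shift
    corr = np.fft.ifft2(cross).real
    dy, dx = np.unravel_index(np.argmax(corr), corr.shape)
    h, w = corr.shape
    # Peak indices past the midpoint correspond to negative shifts
    if dy > h // 2:
        dy -= h
    if dx > w // 2:
        dx -= w
    return int(dy), int(dx)
```

Because only the phase is kept, the estimate does not depend on overall brightness. This is one reason the technique tolerates photometric differences between images.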

In manufacturing, geometric transformations allow computer vision systems to recognize parts regardless of their orientation or size, supporting reliable image segmentation and inspection.

Photometric Adjustments

Photometric adjustments modify the brightness, contrast, and color of images. These image processing techniques help computer vision systems adapt to different lighting conditions. Common photometric adjustments include:

- Brightness adjustment to simulate various exposure levels.

- Contrast adjustment to simulate differences in intensity windowing (the range of gray levels mapped to the displayed image).

- Color distortions, such as changes in hue and saturation, for color images.

Photometric imaging systems capture high-resolution images with more gray levels (higher bit depth). This enables detection of subtle defects that standard machine vision setups might miss. Photometric stereo, for example, captures several images of the same part under light from different directions and uses them to reveal 3D surface features, improving defect detection and segmentation. These techniques improve operational efficiency and product quality in high-value manufacturing.

| Aspect | Explanation |
|---|---|
| Technique | Photometric stereo analyzes reflections to separate 3D shape from texture. |
| Image Quality Impact | Enhances contrast, revealing subtle features and defects. |
| Surface Analysis | Estimates surface normals for better segmentation and defect detection. |
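Photometric stereo is easiest to sketch under the classic Lambertian assumption: a matte surface, known distant light directions, and no shadows or highlights. Under these assumptions each observed intensity is I_k = albedo * (l_k · n), where l_k is the k-th light direction and n is the unit surface normal. Stacking K ≥ 3 images then gives a per-pixel linear least-squares problem for the scaled normal albedo * n. Below is a minimal NumPy sketch of that idea; real inspection setups usually add shadow handling and light calibration:

```python
import numpy as np


def photometric_stereo(intensities: np.ndarray, light_dirs: np.ndarray):
    """Recover albedo and unit surface normals from K images under K known lights.

    intensities: (K, H, W) images of a static scene, one per light direction.
    light_dirs:  (K, 3) light direction vectors, K >= 3 and not coplanar.
    Assumes a Lambertian surface without shadows: I_k = albedo * (l_k . n).
    """
    k, h, w = intensities.shape
    observations = intensities.reshape(k, -1)  # (K, H*W), one column per pixel
    # Least-squares solve of light_dirs @ g = I for every pixel at once; g = albedo * n
    g, *_ = np.linalg.lstsq(light_dirs, observations, rcond=None)  # (3, H*W)
    albedo = np.linalg.norm(g, axis=0)
    normals = (g / np.maximum(albedo, 1e-12)).T.reshape(h, w, 3)
    return albedo.reshape(h, w), normals
```

Scratches and dents show up as abrupt changes in the recovered normals even when they barely change the albedo. This is why the normal map is useful for segmentation.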

Filtering and Enhancement

Filtering and enhancement are essential image processing techniques for noise reduction and feature improvement. These methods make important details stand out, supporting accurate computer vision analysis. Common filtering and enhancement methods include:

- Median Filtering: Removes salt-and-pepper noise by replacing each pixel with the median of its neighbors.

- Bilateral Filtering: Reduces noise while preserving edges.

- Frequency Divided Filter (FDF) and 3D Multi-process Noise Reduction (3D-MNR): Improve clarity under low illumination.

Other advanced filters, such as BM3D and anisotropic diffusion, balance noise removal and detail preservation. Enhancement techniques like histogram equalization and normalization standardize image data, making features more visible. These image processing techniques improve tasks like defect detection, object recognition, and image segmentation.

Filtering and enhancement increase the reliability of computer vision systems by reducing noise and highlighting critical features.

Morphological Operations

Morphological operations refine image shapes and structures, making them vital for segmentation and object recognition. These image processing techniques use structuring elements to process binary or grayscale images. The primary morphological operations include:

| Morphological Operation | Description | Typical Applications |
|---|---|---|
| Dilation | Adds pixels to object boundaries, enlarging features | Connecting broken parts, filling gaps |
| Erosion | Removes pixels from object boundaries, shrinking features | Removing noise, isolating elements |
| Opening | Erosion followed by dilation; removes small objects/noise | Cleaning images, preserving main structures |
| Closing | Dilation followed by erosion; fills holes, connects edges | Sealing cracks, improving shape consistency |
| Morphological Gradient | Dilation minus erosion; highlights object boundaries | Edge detection, boundary refinement |

Morphological operations enhance segmentation by refining shapes, reducing noise, and improving boundary accuracy. Combining opening and closing eliminates noise and bridges gaps in segmented objects. Selecting the right structuring element based on object geometry further improves segmentation precision.

Morphological operations are fundamental in image processing for accurate image segmentation, quality control, and automated inspection.

Machine Vision Workflow

Image Acquisition

Machine vision systems begin with image acquisition. Cameras equipped with CMOS or CCD sensors capture high-quality images of products on the manufacturing line. These sensors convert scenes into digital images, providing the foundation for all subsequent image processing. Lighting setup plays a crucial role in this step. Proper illumination, such as ring lighting or backlighting, enhances contrast and reveals defects that might otherwise remain hidden. A position sensor detects when an object enters the camera's field of view and triggers image capture. The camera and lighting system synchronize to ensure clear, consistent images for inspection. Advanced options like 3D cameras and Time-of-Flight sensors add depth information, supporting complex inspection tasks.

Preprocessing Steps

After image acquisition, preprocessing prepares images for analysis. Image pre-processing includes resizing images to standard dimensions, normalizing pixel values, and converting color images to grayscale when needed. Noise reduction filters, such as Gaussian or median filters, remove unwanted artifacts. Adjusting brightness and contrast makes features more visible, which is essential for accurate defect detection and inspection. Preprocessing also involves segmentation to isolate regions of interest and data augmentation to increase dataset diversity. These steps improve image quality and ensure that image processing and analysis deliver reliable results. Proper preprocessing stabilizes model training and enhances the accuracy of image classification, object detection, and segmentation.

Note: Careful preprocessing prevents the loss of important details, supporting precise inspection and defect detection in manufacturing.

Transformation and Analysis

Once preprocessing is complete, image processing techniques transform and analyze the data. Systems apply geometric and photometric transformations to align and enhance images. Feature extraction methods identify shapes, textures, and colors relevant to inspection. Image segmentation isolates defects or specific parts for closer examination. Machine vision systems use object detection and image classification algorithms to recognize and categorize defects. Advanced analysis tools, such as convolutional neural networks, support real-time inspection and classification. The results guide automated actions, such as assembly line adjustments or quality control decisions. Integration with manufacturing execution systems enables real-time monitoring, predictive maintenance, and improved traceability. This workflow ensures that inspection, defect detection, and image classification remain accurate and efficient throughout the manufacturing process.

| Step | Description |
|---|---|
| Image Acquisition | Cameras and lighting capture digital images for inspection. |
| Preprocessing | Image pre-processing enhances quality and prepares data for analysis. |
| Transformation | Image processing aligns and improves images for further analysis. |
| Analysis | Systems perform object detection, segmentation, and classification. |
| Output | Results drive automated inspection and manufacturing decisions. |

Getting Started

Tools and Libraries

Many open-source libraries help beginners explore image transformation in machine vision. These libraries support a wide range of computer vision tasks, from simple filtering to advanced object detection. The table below highlights some of the most popular options:

| Library | Best For | Key Features |
|---|---|---|
| OpenCV | General-purpose computer vision | Fast performance, many image/video tools, deep learning support, bindings for many languages |
| PyTorch | Research and deep learning | Dynamic graphs, GPU acceleration, pre-trained models, image augmentations via torchvision |
| scikit-image | Image processing with ML | Works with NumPy and scikit-learn, supports multi-dimensional images, fast operations |
| TensorFlow | Deep learning-based vision tasks | Scalable, pre-trained models, supports mobile and cloud, easy custom training |
| Detectron2 | Object detection and segmentation | Built on PyTorch, modular, state-of-the-art models, efficient training and inference |
| SimpleCV | Beginners and rapid prototyping | Easy Python framework for quick experiments; no longer actively maintained |

For those who prefer graphical tools, general-purpose photo editors such as ON1 Photo RAW, Adobe Lightroom, and Affinity Photo offer user-friendly interfaces, batch processing, AI-powered enhancements, and step-by-step tutorials. They are not machine vision libraries. They do, however, let beginners see how brightness, contrast, and color adjustments change an image before coding those steps.

Simple Project Example

A simple project helps beginners understand how machine vision works in real-world applications. For example, a user can build a basic object detection system using OpenCV and Python. The project captures images from a webcam, applies geometric transformations, and highlights objects with bounding boxes. This process introduces key computer vision concepts like filtering, segmentation, and classification. By experimenting with different transformations, users see how changes affect detection accuracy. Many applications in manufacturing and quality control use similar workflows to inspect products and sort items.

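```python
import cv2

# Capture video from the default webcam (device index 0)
cap = cv2.VideoCapture(0)

while True:
    ret, frame = cap.read()
    if not ret:
        # Stop if no frame could be read (camera missing or disconnected)
        break

    # Convert to grayscale
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

    # Apply Gaussian blur to reduce noise
    blurred = cv2.GaussianBlur(gray, (5, 5), 0)

    # Show the result; press 'q' to quit
    cv2.imshow('Blurred Image', blurred)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

# Release the camera and close the display window
cap.release()
cv2.destroyAllWindows()
```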

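This code covers the first steps of such a project: capturing frames, converting them to grayscale, and applying a Gaussian blur. Geometric transformations and bounding-box detection would build on this loop.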

Tips for Beginners

Beginners should start with simple projects and gradually explore more advanced machine vision applications. They can use libraries like OpenCV or scikit-image for hands-on practice. Reading official documentation and following online tutorials helps build confidence. Many tools offer free resources and community support. Beginners should focus on understanding basic concepts such as image filtering, segmentation, and classification before moving to deep learning. Consistent practice and experimentation lead to better results in computer vision and machine vision projects.

Tip: Save project code and results to track progress and identify areas for improvement.

Mastering image transformation helps machine vision systems achieve high accuracy in manufacturing and quality control. Beginners benefit from starting with simple projects using tools like OpenCV or scikit-image.

- Image segmentation divides images into meaningful parts, making object analysis easier.

- Beginners should try different segmentation types and learn both traditional and deep learning methods.

- Evaluation metrics such as Dice Similarity Coefficient and F1-score help measure progress.
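Both metrics can be computed directly from binary masks. Below is a minimal NumPy sketch; the function names are illustrative. When both masks are empty, it returns 1.0 by convention. For binary masks the two scores coincide, because |A| + |B| = 2TP + FP + FN:

```python
import numpy as np


def dice_coefficient(pred: np.ndarray, truth: np.ndarray) -> float:
    """Dice = 2|A ∩ B| / (|A| + |B|) for binary masks (1.0 when both are empty)."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    total = pred.sum() + truth.sum()
    if total == 0:
        return 1.0
    return float(2.0 * np.logical_and(pred, truth).sum() / total)


def f1_score(pred: np.ndarray, truth: np.ndarray) -> float:
    """Pixel-wise F1 = 2PR / (P + R) from true positives, false positives, false negatives."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    tp = np.logical_and(pred, truth).sum()
    fp = np.logical_and(pred, ~truth).sum()
    fn = np.logical_and(~pred, truth).sum()
    if tp == 0:
        return 1.0 if fp == 0 and fn == 0 else 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return float(2 * precision * recall / (precision + recall))
```

For example, two 4x4 squares that overlap in a 2x2 corner give a Dice score and an F1 score of 0.25.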

Next steps include:

- Building a strong foundation in computer vision theory.

- Exploring practical applications like object tracking.

- Joining free online bootcamps for hands-on experience.

Experimenting with small projects builds confidence and prepares learners for real-world machine vision challenges.

FAQ

What is the main goal of image transformation in machine vision?

Image transformation prepares images for analysis. The system aligns, enhances, and cleans images. This process helps machine vision systems detect objects and defects with higher accuracy.

Which industries use image transformation the most?

Manufacturing, automotive, electronics, and food processing industries use image transformation. These sectors rely on machine vision for inspection, sorting, and quality control.

Can beginners use open-source tools for image processing?

Yes. Beginners can use open-source libraries like OpenCV and scikit-image. These tools offer tutorials, sample code, and active communities for support.

How does lighting affect image transformation results?

Lighting changes image quality. Proper lighting reveals important features and defects. Poor lighting can hide details or create shadows, making analysis harder.
