Every manufactured part that ships with a defect carries a compounding cost: the direct cost of the defective unit, the downstream warranty claim, and — in safety-critical industries like automotive or battery manufacturing — the potential cost of a field failure that cannot be undone by any amount of corrective action. The traditional response has been to place inspectors at the end of the line or deploy rule-based machine vision systems that check for anticipated defects against preset thresholds.

Both approaches share a common failure mode: they can only detect what they were designed to find, and they cannot keep pace with the speed, variety, and complexity of modern production. AI visual inspection replaces fixed rules and human judgment with a deep learning model trained to recognize defect patterns the way an expert does — by learning from examples rather than explicit rules. This article defines what AI visual inspection is, how the technology works at a system level, and where it is being deployed to achieve inspection accuracy that surpasses both approaches.

Key Takeaways

- AI visual inspection uses trained deep learning models to detect, classify, and locate defects in manufactured parts at production line speed, without manually programmed rules or human judgment at the point of inspection.

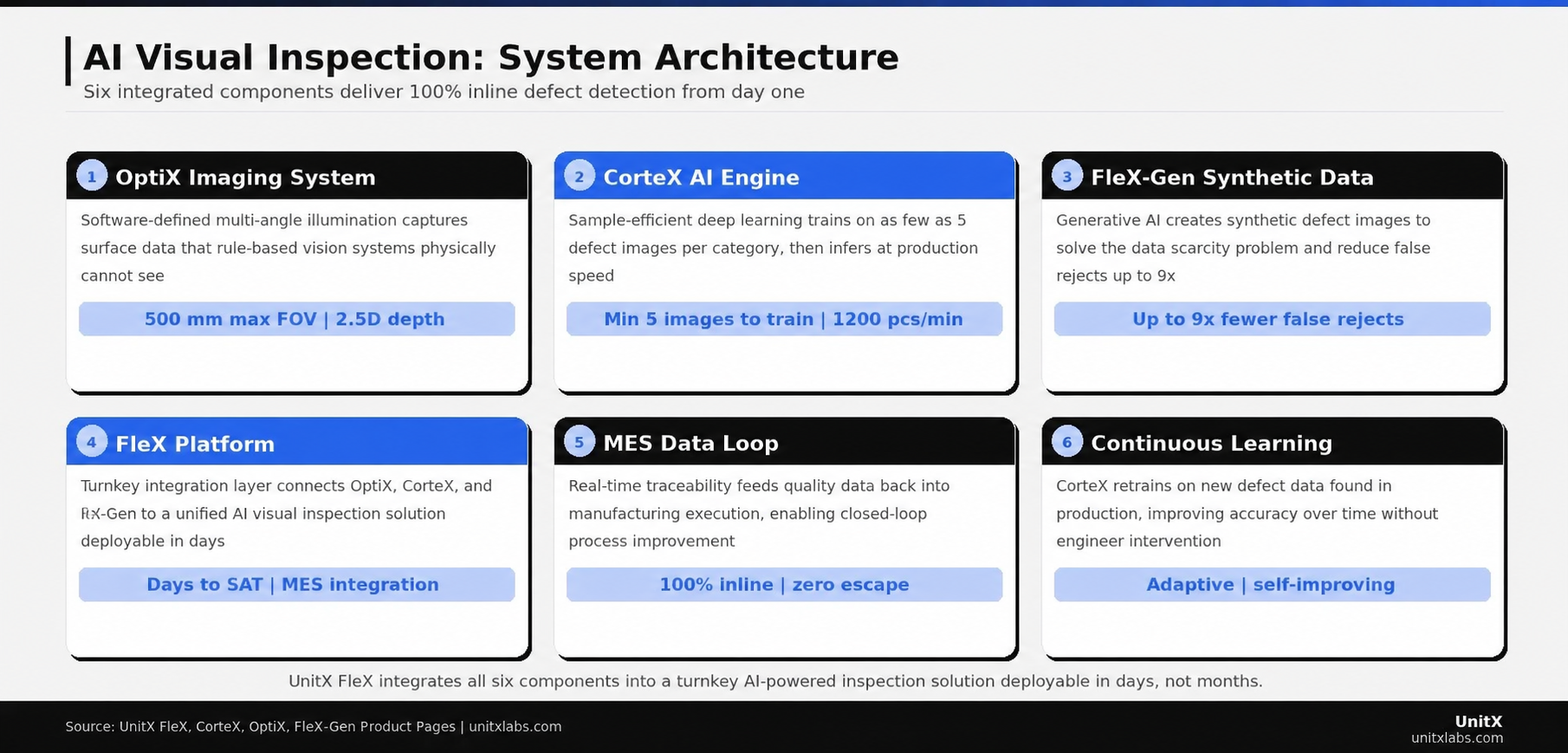

- The technology combines three hardware and software layers: an imaging system that captures richer surface data than conventional cameras, a deep learning inference engine that classifies defects at the pixel level, and a data integration layer that feeds results to MES in real time.

- Sample efficiency is a critical differentiator in modern AI inspection — systems like CorteX can train on as few as 5 defect images per category, addressing the data scarcity problem that previously limited AI adoption in manufacturing quality control.

- False acceptance rate (FA) and false rejection rate (FR) are the definitive performance metrics, not overall accuracy — world-class AI inspection systems target FA = 0% with FR ≤ 1%, meaning zero defect escapes and minimal overkill.

The Limitations That AI Visual Inspection Solves

Why Manual Inspection Has a Hard Accuracy Ceiling

Manual inspection — human QC inspectors examining parts visually or under magnification — has a theoretical accuracy ceiling that falls well below the zero-escape standard required by Tier 1 automotive, EV battery, and semiconductor customers. Research conducted by Sandia National Laboratories, published in Human Factors, documented that precision manufacturing inspectors correctly rejected an average of 85% of defective parts under controlled conditions. The study noted that this figure represents the industry ceiling, not a floor, and was achieved at the cost of a 35% false rejection rate (PubMed — Visual Inspection Reliability for Precision Manufactured Parts).

The deeper problem is that manual inspection does not scale with production volume. Increasing throughput requires a proportional increase in inspector headcount, yet adding inspectors does not raise the accuracy ceiling. Ten inspectors with 80% individual accuracy reviewing the same part do not produce 100% detection; their errors are correlated and cluster around the same borderline defect profiles that every individual inspector finds difficult.
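A rough way to see the effect of correlated errors is to compare two toy models of a ten-person team. In the first, misses are statistically independent; in the second, difficulty depends on the defect rather than on the inspector. The 20% borderline share and the per-group detection rates below are illustrative assumptions chosen so that individual accuracy still works out to 80%; they are not figures from the cited study.

```python
def team_miss_rate_independent(individual_accuracy, inspectors):
    """Probability that every inspector misses a defect if misses were independent."""
    return (1.0 - individual_accuracy) ** inspectors


def team_miss_rate_correlated(borderline_share, accuracy_on_borderline,
                              accuracy_on_obvious, inspectors):
    """Team miss rate when some defects are hard for everyone.

    Within each defect group misses are treated as independent, but the
    borderline group is difficult for all inspectors, so errors cluster there.
    """
    miss_borderline = (1.0 - accuracy_on_borderline) ** inspectors
    miss_obvious = (1.0 - accuracy_on_obvious) ** inspectors
    return (borderline_share * miss_borderline
            + (1.0 - borderline_share) * miss_obvious)


# Hypothetical mix: 20% borderline defects caught 10% of the time,
# 80% obvious defects caught 97.5% of the time.
# Individual accuracy = 0.2 * 0.10 + 0.8 * 0.975 = 0.80.
independent = team_miss_rate_independent(0.80, 10)
correlated = team_miss_rate_correlated(0.20, 0.10, 0.975, 10)

print(f"independent model, team miss rate: {independent:.2e}")
print(f"correlated model, team miss rate:  {correlated:.2e}")
```

Under the independence assumption the team would almost never miss a defect. Under the correlated model, with the same 80% individual accuracy, roughly 7% of defects still escape all ten inspectors, because nearly all of the misses fall on the same borderline parts.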

Why Rule-Based Machine Vision Fails on Complex Surfaces

Rule-based machine vision replaced manual inspection in high-volume, low-mix production lines where inspection tasks are simple and stable. A pixel intensity threshold works reliably for a matte black surface under consistent illumination. It fails when the surface is reflective, when product mix changes, when illumination drifts with temperature, or when new defect types appear that were not anticipated in the original rule set.
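The fragility of a fixed threshold can be shown with a toy example. The sketch below is not taken from any particular vision product: the image values, the threshold of 120, and the 25% brightness drift are all made-up numbers, and the surface is modeled as a small grid of 8-bit grayscale pixels.

```python
def rule_based_flags(image, threshold):
    """Return (row, col) of every pixel brighter than a fixed threshold."""
    return [(r, c)
            for r, row in enumerate(image)
            for c, value in enumerate(row)
            if value > threshold]


def brighten(image, gain):
    """Simulate illumination drift by scaling every pixel, clipped to 8 bits."""
    return [[min(255, round(value * gain)) for value in row] for row in image]


# Toy patch: uniform matte surface (about 100) with one bright scratch pixel (200).
surface = [
    [100, 102, 101, 99],
    [101, 200, 100, 102],
    [99, 101, 103, 100],
]
THRESHOLD = 120  # hypothetical value tuned for the original lighting

flags_nominal = rule_based_flags(surface, THRESHOLD)
drifted = brighten(surface, 1.25)  # lighting becomes 25% brighter
flags_drifted = rule_based_flags(drifted, THRESHOLD)

print(flags_nominal)       # [(1, 1)]: only the scratch pixel
print(len(flags_drifted))  # 12: every pixel now exceeds the threshold
```

Under nominal lighting the rule isolates the scratch. After the drift, every good pixel crosses the same threshold, so the rule rejects the whole part until someone re-tunes it.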

The maintenance burden of rule-based systems is a second failure mode. Every new product variant, change in surface treatment, or shift in incoming material quality requires a vision specialist to manually re-tune the rules — a process that typically involves production downtime and specialized engineering time. The International Federation of Robotics reports that machine vision system maintenance and reprogramming costs account for 35–50% of the total cost of ownership for conventional vision systems over a five-year production life (IFR — World Robotics and Vision System Cost of Ownership).

What AI Visual Inspection Is

The Technical Definition

AI visual inspection is an inspection methodology in which a trained deep learning model analyzes image data from a production line to detect, classify, and locate defects — without explicit rules, threshold-based logic, or human judgment at the point of inspection. The model learns defect patterns from labeled training images and applies that learning to new, unseen parts in real time.

The critical word in that definition is “trained.” Unlike a rule-based system that encodes explicit logic (“flag pixels brighter than X in region Y”), a deep learning model encodes statistical patterns learned from examples. It can recognize a scratch even if the scratch appears at an unexpected location, orientation, or surface finish, because it has learned the underlying visual signature of a scratch rather than relying on a hardcoded rule.

In an AI-powered inspection system like UnitX FleX, this deep learning inference is performed by CorteX — a sample-efficient training and high-speed inference engine that runs deep learning segmentation at up to 100 MP/s. Deep learning segmentation, as opposed to simpler bounding-box detection, identifies the exact pixel-level boundary of each defect, enabling measurement of defect area, shape, and location — all of which feed into the pass/fail decision and the MES quality record.

Performance Metrics That Actually Matter

AI visual inspection performance is defined by two metrics that map directly to business outcomes. False Acceptance Rate (FA) is the fraction of defective parts that pass inspection undetected — these become escapes, warranty claims, and field failures. The target for world-class AI inspection is FA = 0%. False Rejection Rate (FR) is the fraction of good parts incorrectly rejected — these become scrap or rework costs and reduce effective line yield. Typical targets for AI inspection systems are FR ≤ 1% for standard applications, with tighter specifications in high-value assemblies.
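The two definitions translate directly into code. The following is a minimal sketch; the class name, field names, and part counts are illustrative assumptions. It also computes overall accuracy for the same counts to show why that number can hide escapes.

```python
from dataclasses import dataclass


@dataclass
class InspectionCounts:
    defective_rejected: int  # defective parts correctly rejected
    defective_accepted: int  # defective parts that passed (escapes)
    good_accepted: int       # good parts correctly accepted
    good_rejected: int       # good parts wrongly rejected (overkill)


def false_acceptance_rate(c):
    """FA: fraction of defective parts that pass inspection."""
    defective = c.defective_rejected + c.defective_accepted
    return c.defective_accepted / defective if defective else 0.0


def false_rejection_rate(c):
    """FR: fraction of good parts that are rejected."""
    good = c.good_accepted + c.good_rejected
    return c.good_rejected / good if good else 0.0


def overall_accuracy(c):
    """Fraction of all parts given the correct disposition."""
    total = (c.defective_rejected + c.defective_accepted
             + c.good_accepted + c.good_rejected)
    return (c.defective_rejected + c.good_accepted) / total if total else 0.0


# Hypothetical shift: 10,000 parts, 50 of them defective, 5 escapes, no overkill.
shift = InspectionCounts(defective_rejected=45, defective_accepted=5,
                         good_accepted=9950, good_rejected=0)

print(f"FA = {false_acceptance_rate(shift):.1%}")        # 10.0%
print(f"FR = {false_rejection_rate(shift):.1%}")         # 0.0%
print(f"accuracy = {overall_accuracy(shift):.2%}")       # 99.95%
```

In this made-up shift the system is 99.95% accurate overall, yet one in ten defective parts reaches the customer. Because defects are rare, overall accuracy is dominated by good parts, which is why FA and FR are reported separately.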

These two metrics trade off against each other in any inspection system. Human inspection performs poorly on both — high FA due to imperfect attention, and high FR because inspectors err on the side of rejection when uncertain. Rule-based systems can achieve low FA for known defect types but suffer from high FR due to false alarms triggered by surface variation. AI visual inspection, with sufficient training data and a capable inference architecture, can achieve low FA and low FR simultaneously — which is why its adoption is accelerating across precision manufacturing sectors.
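The trade-off can be illustrated by sweeping a decision threshold over a set of defect scores. This sketch assumes the inspection system exposes a per-part score between 0 and 1 and rejects parts at or above a threshold; that interface and all of the scores are hypothetical.

```python
def rates_at_threshold(scores_defective, scores_good, threshold):
    """Reject a part when its defect score is >= threshold; return (FA, FR)."""
    fa = sum(s < threshold for s in scores_defective) / len(scores_defective)
    fr = sum(s >= threshold for s in scores_good) / len(scores_good)
    return fa, fr


# Made-up scores with some overlap between defective and good parts.
defective_scores = [0.95, 0.90, 0.80, 0.55, 0.35]
good_scores = [0.05, 0.08, 0.10, 0.12, 0.15, 0.20, 0.30, 0.40, 0.45, 0.60]

for threshold in (0.3, 0.5, 0.7):
    fa, fr = rates_at_threshold(defective_scores, good_scores, threshold)
    print(f"threshold={threshold:.1f}  FA={fa:.0%}  FR={fr:.0%}")
```

With these numbers, a threshold of 0.3 gives FA = 0% but FR = 40%, while 0.7 gives FR = 0% but FA = 40%. Moving the threshold only shifts errors from one metric to the other. Lowering both at once requires the two score distributions to overlap less, which is what better image data and a better-trained model contribute.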

How an AI Visual Inspection System Works

Layer 1 — The Imaging System

The quality of AI inspection output is bounded by the quality of the image input. A deep learning model cannot detect a defect that does not appear in the image data, regardless of how capable the model is. This is why the imaging layer — the physical system that captures light from the part surface — is as critical as the AI layer to overall inspection performance.

Advanced AI visual inspection systems use software-defined imaging to capture multi-angle image data that encodes surface information far beyond what a conventional single-angle camera can provide. OptiX, UnitX’s AI-powered imaging system, uses 32 independent illumination channels to produce image data that captures angular, depth, and reflectance information within each inspection frame. The result is an image that carries far more discriminative information for the CorteX deep learning model — which is why CorteX achieves superhuman detection accuracy on reflective and complex surfaces where single-angle imaging produces ambiguous data.

The maximum FOV of OptiX reaches 500 mm, accommodating large automotive assemblies and battery modules, while the 50 MP sensor resolution enables detection of defects at sub-pixel scale. Fly capture at 1 m/s line speed ensures 100% inline coverage without compromising throughput. For more detail, the OptiX product page documents the full imaging system specification.
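As a back-of-the-envelope check on what fly capture implies, the sketch below estimates the frame rate and pixel throughput needed for gap-free coverage. It rests on assumptions the article does not state: that the full 500 mm field of view lies along the direction of travel, that consecutive frames do not overlap, and that each frame is a single full-resolution 50 MP image. A real installation may differ on all three.

```python
def frames_per_second(line_speed_mm_s, fov_along_travel_mm, overlap_fraction=0.0):
    """Minimum capture rate so consecutive frames tile a moving part without gaps."""
    advance_per_frame_mm = fov_along_travel_mm * (1.0 - overlap_fraction)
    return line_speed_mm_s / advance_per_frame_mm


def pixel_throughput_mp_s(fps, sensor_megapixels):
    """Megapixels per second that the downstream inference stage must process."""
    return fps * sensor_megapixels


# 1 m/s line speed, 500 mm field of view along travel (assumed), no overlap.
fps = frames_per_second(line_speed_mm_s=1000.0, fov_along_travel_mm=500.0)
throughput = pixel_throughput_mp_s(fps, sensor_megapixels=50.0)

print(f"{fps:.1f} frames/s, {throughput:.0f} MP/s")  # 2.0 frames/s, 100 MP/s
```

Under these assumptions the imaging layer produces about 100 MP/s, the same order as the inference rate quoted for CorteX earlier in the article, although the source does not say the two figures are related. Adding frame overlap or a smaller field of view raises the required rate proportionally.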

Layer 2 — The AI Inference Engine

The inference engine consists of the deep learning model and the hardware it runs on. In CorteX, the architecture is built around deep learning segmentation, a method that classifies every pixel in the image and produces a defect map rather than a simple bounding box. This pixel-level output enables precise measurement of defect geometry and allows the system to distinguish between defects of different sizes (e.g., a 0.1 mm² scratch that is within specification vs. a 0.5 mm² scratch that is not), even when they appear similar at lower resolution.
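To make the link between a pixel-level defect map and a size-based pass/fail decision concrete, here is a minimal sketch. The 0.1 mm pixel size and the 0.2 mm² acceptance limit are hypothetical, and the masks are hand-built stand-ins for a segmentation model's output rather than anything produced by CorteX.

```python
def defect_area_mm2(mask, pixel_size_mm):
    """Area of a binary defect mask: defect pixel count times the area of one pixel."""
    defect_pixels = sum(sum(row) for row in mask)
    return defect_pixels * pixel_size_mm ** 2


def disposition(area_mm2, max_area_mm2):
    """Pass the part when the defect area is within the specification limit."""
    return "PASS" if area_mm2 <= max_area_mm2 else "FAIL"


PIXEL_SIZE_MM = 0.1   # hypothetical: each pixel covers 0.1 mm x 0.1 mm
MAX_AREA_MM2 = 0.2    # hypothetical specification limit

# Hand-built masks: 1 marks a defect pixel, 0 marks good surface.
small_scratch = [[0] * 12] + [[0] + [1] * 10 + [0]] + [[0] * 12]                    # 10 pixels
large_scratch = [[0] * 12] + [[0] + [1] * 10 + [0] for _ in range(5)] + [[0] * 12]  # 50 pixels

for name, mask in (("small", small_scratch), ("large", large_scratch)):
    area = defect_area_mm2(mask, PIXEL_SIZE_MM)
    print(f"{name}: {area:.2f} mm^2 -> {disposition(area, MAX_AREA_MM2)}")
```

The small scratch measures 0.10 mm² and passes; the large one measures 0.50 mm² and fails. A bounding box alone could not support this decision, because a thin diagonal scratch and a solid blob can share the same box while differing greatly in area.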

Sample efficiency — the ability to train an effective model using a small number of labeled images — is a key differentiator in modern AI inspection systems. CorteX achieves reliable inference from as few as 5 images per defect category. This is critical because rare defect types, by definition, do not generate large training datasets through production sampling. The CorteX system documentation covers the training architecture and sample efficiency mechanisms in detail.

Layer 3 — Synthetic Data Augmentation

Even a sample-efficient model benefits from diverse training data. FleX-Gen, UnitX’s generative AI tool, creates synthetic defect images that are physically consistent with OptiX imaging characteristics, meaning they can be added directly to the training dataset without the domain shift issues that would degrade model performance. FleX-Gen augmentation has been documented to reduce false rejection rates by up to 9x in production deployments by ensuring that the model is trained on a representative distribution of defect appearances rather than the naturally skewed distribution that production sampling yields.

The combination of small real labeled datasets augmented by FleX-Gen synthetic data solves the data scarcity problem that was the primary barrier to AI inspection adoption in manufacturing for the first decade of deep learning’s industrial application. Explore this capability further at the FleX-Gen product page.

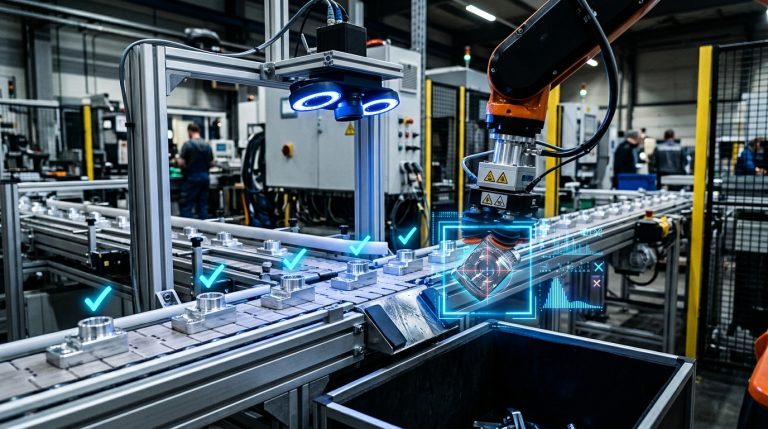

A complete AI visual inspection system integrates six components: the imaging system that captures surface data, the AI inference engine, synthetic data augmentation, the integration platform, the MES data loop, and continuous model improvement — each reinforcing inspection accuracy over time.

Layer 4 — MES Integration and Data Traceability

The output of an AI inspection system is only as valuable as the infrastructure that captures and distributes it. World-class AI inspection deployments integrate directly with the plant’s Manufacturing Execution System (MES), transmitting the pass/fail decisions, defect classifications, defect location coordinates, and part serial numbers for every inspection event in real time.
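A per-part inspection record of this kind might be serialized as follows. This is only a sketch of the data shape: every field name, the JSON encoding, and the example values are assumptions for illustration and do not describe the actual FleX message format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Defect:
    defect_class: str  # e.g. "scratch"
    x_mm: float        # defect location on the part
    y_mm: float
    area_mm2: float


@dataclass
class InspectionEvent:
    serial_number: str
    timestamp_utc: str
    result: str        # "PASS" or "FAIL"
    defects: List[Defect] = field(default_factory=list)


def to_mes_payload(event):
    """Serialize one inspection event to a JSON string (hypothetical schema)."""
    return json.dumps(asdict(event), sort_keys=True)


event = InspectionEvent(
    serial_number="SN-000123",             # made-up serial number
    timestamp_utc="2024-01-01T00:00:00Z",  # fixed example timestamp
    result="FAIL",
    defects=[Defect("scratch", x_mm=12.5, y_mm=40.0, area_mm2=0.5)],
)

print(to_mes_payload(event))
```

Keeping the serial number, disposition, and defect list in one record is what lets a later query tie a field return back to the exact image evidence for that part.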

This real-time data traceability enables closed-loop quality control. Process engineering teams can observe defect rate trends, identify process drift before escapes accumulate, and trigger corrective actions based on live inspection data rather than delayed warranty feedback. The U.S. Department of Energy’s Advanced Manufacturing Office has documented that real-time quality data integration can reduce scrap rates by 15–40% in precision manufacturing environments by enabling earlier intervention (U.S. Department of Energy — Advanced Manufacturing Office Research).
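One simple way to turn a live stream of inspection results into a drift alert is a rolling defect-rate monitor. The sketch below is a generic illustration, not a description of any vendor feature; the 20-part window, the 10% alert limit, and the simulated result stream are all assumptions.

```python
from collections import deque


class DefectRateMonitor:
    """Track the defect rate over the most recent `window` parts."""

    def __init__(self, window, alert_rate):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.alert_rate = alert_rate

    def record(self, is_defective):
        """Add one result; return True when a full window exceeds the alert rate."""
        self.results.append(is_defective)
        window_full = len(self.results) == self.results.maxlen
        rate = sum(self.results) / len(self.results)
        return window_full and rate > self.alert_rate


# Simulated stream: 40 parts at 1 defect in 20, then the process drifts to 1 in 5.
stream = [i % 20 == 0 for i in range(40)] + [i % 5 == 0 for i in range(40)]

monitor = DefectRateMonitor(window=20, alert_rate=0.10)
first_alert = next(i for i, defective in enumerate(stream) if monitor.record(defective))

print(f"drift starts at part index 40, first alert at part index {first_alert}")
```

In this simulation the alert fires at part index 50, ten parts after the drift begins. A shorter window reacts faster but is noisier, so the window size and limit would need tuning against the line's normal defect rate.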

AI Visual Inspection Applications by Industry

Battery and EV Manufacturing

Battery cell and module manufacturing represents one of the most demanding AI inspection environments in precision manufacturing. Electrode foil surfaces are highly reflective and require inspection for pinholes, contamination particles, and edge defects at the micron scale. Tab welding inspection must distinguish between acceptable weld geometry and subtle structural defects that could cause cell failure under cycling stress.

AI visual inspection, powered by OptiX’s multi-angle illumination and CorteX’s deep learning segmentation, addresses all three of these challenges in a single inspection station. The system achieves 100% inline coverage at battery cell production speeds, replacing the combination of human spot-check inspection and occasional post-process destructive testing that currently serves as the industry baseline. Review the battery industry solution for a full description of the inspection application stack.

Automotive Components

Automotive Tier 1 suppliers face inspection requirements across an extraordinarily wide range of surface types: machined aluminum castings, chrome-plated trim, painted body panels, rubber seals, and glass — sometimes within a single product family. AI visual inspection deployed on a FleX platform with OptiX imaging handles this surface variety through software-defined illumination profiles, switching between product-specific optical configurations in minutes rather than days.

The zero-escape quality standard demanded by OEM customers (FA = 0%) is achievable with AI inspection, where it is not attainable with either manual inspection or rule-based vision systems for complex surface types. UnitX serves the top 5 Tier 1 automotive suppliers globally, making the automotive sector the largest current deployment base for AI-powered inspection. See relevant case studies on the case studies page.

Semiconductor Packaging

Semiconductor back-end inspection — such as solder joint inspection, wire bond integrity, and substrate surface quality — requires both high spatial resolution and the ability to distinguish between dozens of defect classes that may appear visually similar under conventional illumination. AI visual inspection with deep learning segmentation provides the classification granularity needed to reliably separate void-containing solder joints from acceptable joints, without the high false rejection rates that can negatively impact yield in high-value semiconductor assembly (IEEE — Explainable AI for Advanced IC Packaging Inspection).

The semiconductor inspection solution outlines the specific configurations and performance metrics documented across package-type and substrate inspections.

| Metric | Manual Inspection | Rule-Based Vision | AI Visual Inspection |
| --- | --- | --- | --- |
| FA (False Acceptance) | 15–30% | 5–15% on new defect types | Target 0% |
| FR (False Rejection) | High (subjective) | High on surface variation | Target ≤ 1% |
| Coverage | Sample-based | 100% inline (simple surfaces) | 100% inline at line speed |
| New Product Reconfiguration | Retrain inspectors | Days (hardware + rule rewrite) | Minutes (software profile) |
| Traceability | Manual log (incomplete) | Pass/fail only | Full defect map, per-part record |

What the Deployment Path Looks Like

From First Sample to Production SAT

A well-designed AI visual inspection deployment begins with a sample inspection run using actual production parts — both good parts and known defective parts — to validate detection performance before any production commitment. This sample run establishes the baseline FA and FR performance against the agreed specification and identifies any defect classes that require additional training data or FleX-Gen synthetic augmentation before the system meets the production target.

Once the sample run confirms specification compliance, production deployment proceeds through mechanical installation, MES integration, and operator training. The UnitX FleX platform targets Site Acceptance Testing (SAT) completion within days of installation — a significant reduction compared to the weeks or months required by conventional machine vision integration projects. This rapid SAT timeline is possible because the AI model is pre-trained on the sample run data, and the integration layer is pre-configured to the plant’s MES protocol before physical installation begins.

Post-deployment, the system runs autonomously with continuous model improvement. New defect types encountered in production are flagged for labeling, and CorteX retrains on the updated dataset at defined intervals. The inspection system’s accuracy improves with production experience rather than degrading due to surface wear and product variation — the inverse of what happens with rule-based systems. Review documented deployment outcomes in the UnitX case study library.

Common Questions

How many defect images do I need to start training an AI inspection model?

CorteX is designed to begin effective inference with as few as 5 labeled defect images per defect category. For rare defect types where even 5 real examples are difficult to collect, FleX-Gen synthetic augmentation generates physically realistic defect images that bring the effective training dataset up to the minimum required size. This low data requirement makes AI inspection deployment practical even in facilities where defect rates are low and no historical labeled datasets exist.

What is the difference between AI visual inspection and conventional machine vision?

Conventional machine vision uses manually programmed rules and thresholds to determine whether a pixel pattern indicates a defect. AI visual inspection uses a trained deep learning model that learns defect patterns from labeled examples. The practical difference is that conventional vision requires a specialist to explicitly anticipate and program every defect type, while AI inspection generalizes from examples — meaning it can detect defect variations and new defect types that were never explicitly programmed, within the scope of the defect classes it was trained on.

Can AI visual inspection handle multiple product types on the same line?

Yes. The UnitX FleX platform manages separate AI models and optical configuration profiles for each product variant. When the line switches product types, the appropriate model and OptiX illumination profile are loaded automatically. The reconfiguration happens in software — no physical hardware adjustment is required — which is why FleX supports high-mix production schedules without the changeover downtime associated with conventional machine vision systems.
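Conceptually, software-only changeover amounts to a lookup from product variant to a model and an illumination profile. The sketch below illustrates that idea only; the product IDs, file paths, profile names, and function are invented for the example and are not the FleX configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InspectionProfile:
    model_path: str            # trained model for this product variant
    illumination_profile: str  # named lighting configuration for this surface


# Hypothetical registry: one entry per product variant on the line.
PROFILES = {
    "housing-A": InspectionProfile("models/housing_a.bin", "matte-aluminum"),
    "trim-B": InspectionProfile("models/trim_b.bin", "chrome-reflective"),
}


def profile_for(product_id):
    """Return the profile for a product, refusing to inspect unknown variants."""
    try:
        return PROFILES[product_id]
    except KeyError:
        raise ValueError(f"no inspection profile registered for {product_id!r}")


active = profile_for("trim-B")
print(active.model_path, active.illumination_profile)
```

Raising an error for an unregistered product is a deliberate choice in this sketch: inspecting a new variant with the wrong model or lighting could silently pass defective parts, so failing loudly is the safer default.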

How does AI visual inspection integrate with our existing MES?

FleX includes standard MES integration protocols that transmit inspection results, defect classification, defect location, and part identifiers for every inspection event in real time. The specific integration format — OPC-UA, REST API, database write, or custom protocol — is configured during the pre-installation phase. No custom development is required for major MES platforms commonly deployed in automotive, battery, and semiconductor manufacturing environments.