If you’ve been in manufacturing QA long enough, you’ve probably encountered the pitch before: “Our machine vision system will catch every defect.” And for a while — on a single SKU, with stable lighting, inspecting one specific defect type — maybe it did. Then the product changed. Or a new surface finish came in. Or you added a second shift, and suddenly the false rejection rate climbed to 8% and operators started bypassing the system entirely.

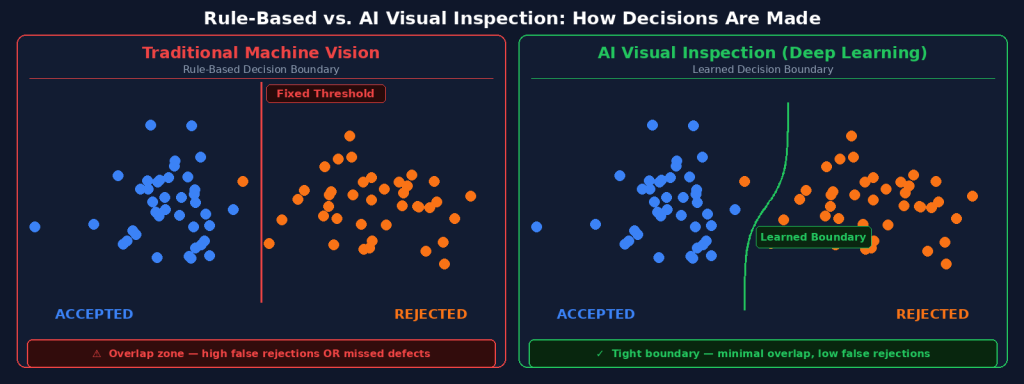

This isn’t a failure of your team. It’s a fundamental limitation of how traditional machine vision works. And understanding that limitation is the starting point for understanding why AI-based visual inspection produces genuinely different outcomes — not as a marketing claim, but as an engineering reality.

Key Takeaways

- Traditional machine vision uses hand-coded rules; AI visual inspection uses deep learning that learns from examples, making it dramatically more robust to real-world variation.

- False Rejection (overkill) is the silent killer of traditional inspection ROI — AI pixel-level segmentation enables precise threshold tuning that reduces false rejects without raising escape rates.

- Setup time and adaptability are where the gap is most visible: rule-based systems require reprogramming for every product change, while AI models can typically be retrained in minutes to hours from a handful of new images.

- For high-variance, complex-surface defects, AI is not just better: traditional machine vision is structurally ill-suited to handling them reliably.

How Traditional Machine Vision Actually Works

The Rule-Based Architecture

Traditional machine vision systems are built on explicit, manually written rules. An engineer examines defect samples, defines what a “bad” pixel pattern looks like (based on intensity thresholds, edge gradients, blob parameters, or geometric measurements), and the system then checks every new image against those rules. If a feature exceeds a defined threshold, it triggers a reject signal.
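Here is a minimal pure-Python sketch of one classic rule, to make the pipeline concrete. It thresholds pixel intensity, groups the bright pixels into connected blobs, and rejects the part if any blob reaches a minimum area. The function names, the choice of 4-connectivity and the threshold values are illustrative assumptions, not any particular vendor's implementation. Production systems run the same steps through optimized libraries.

```python
from collections import deque

def find_blobs(image, intensity_threshold):
    """Group pixels brighter than the threshold into 4-connected blobs."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or image[r][c] <= intensity_threshold:
                continue
            # Breadth-first flood fill starting from this bright seed pixel.
            blob, queue = [], deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                blob.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and image[ny][nx] > intensity_threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append(blob)
    return blobs

def rule_based_reject(image, intensity_threshold=200, min_blob_area=3):
    """Hand-coded rule: reject if any bright blob has at least min_blob_area pixels."""
    return any(len(b) >= min_blob_area
               for b in find_blobs(image, intensity_threshold))

# Tiny grayscale "image" containing one bright 3-pixel feature.
part = [
    [90,  92,  91, 90],
    [91, 230, 235, 90],
    [90, 228,  92, 91],
]
print(rule_based_reject(part))  # True
```

A person chose every number in this rule: the intensity cutoff of 200 and the 3-pixel minimum area. Nothing in the rule distinguishes a scratch from a bright but harmless highlight.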

This architecture has real strengths. It is deterministic, highly interpretable, and extremely fast — rule evaluation takes microseconds, which matters when you’re running a high-speed stamping line. For tasks like dimensional gauging (is this hole within ±0.1mm of spec?) or simple presence/absence checks (is the label here?), rule-based systems remain excellent tools.

Where Rules Break Down

The problem arrives the moment defects become variable in appearance. A scratch on a machined aluminum part looks different depending on several factors:

- its depth and direction
- ambient humidity
- on a multi-angle lighting setup, which angle happened to illuminate it most strongly in that frame

Writing a single rule that catches a 0.3mm scratch without also rejecting every normal surface highlight is, in practice, nearly impossible to sustain across production conditions.

The result: engineers raise thresholds to reduce false rejects, which inevitably allows some real defects to escape. Or they lower thresholds to catch everything, which generates so many false rejects that operators lose confidence in the system and begin overriding it manually. Either path defeats the purpose of automated inspection.
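A few lines of arithmetic show the trade-off. Assume a rule reduces each part to a single anomaly score, for example the largest blob area, and rejects the part when that score exceeds a threshold. The scores below are made-up illustration values, not measurements. The point is that when good and defective parts overlap in score, no single threshold drives both error rates to zero.

```python
def error_rates(good_scores, defect_scores, threshold):
    """Return (false_reject_rate, escape_rate) for the rule 'reject if score > threshold'."""
    false_rejects = sum(s > threshold for s in good_scores)
    escapes = sum(s <= threshold for s in defect_scores)
    return false_rejects / len(good_scores), escapes / len(defect_scores)

# Hypothetical scores: highlights on good parts overlap with shallow scratches.
good = [2, 3, 3, 4, 5, 6, 7, 9]
defective = [5, 8, 10, 12, 15]

for t in (4, 6, 8, 10):
    fr, esc = error_rates(good, defective, t)
    print(f"threshold={t:>2}  false rejects={fr:.0%}  escapes={esc:.0%}")
```

Moving the threshold only shifts errors from one column to the other. At 4, half the good parts are rejected and nothing escapes. At 10, nothing good is rejected but 60% of defects escape.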

How AI Visual Inspection Works Differently

Learning From Examples, Not Rules

AI visual inspection systems, specifically those using deep learning with convolutional neural networks (CNNs), don’t rely on hand-written detection rules. Instead, they learn directly from labeled image examples. Show the model 50 images of good parts and 30 images of scratched parts, and the neural network learns to distinguish the underlying pattern. That pattern is not a specific pixel threshold but the structural signature of a scratch across the many ways it can appear.

This learning-based approach has a profound practical consequence: the model generalizes. It can often correctly classify a scratch it has never seen before, because it has learned what a scratch looks like rather than a hardcoded rule about pixel brightness at a specific coordinate. That learned picture includes the subtle texture disruption, the elongated shadow and the slight reflectance variation.
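The core idea can be shown at toy scale: the decision boundary is fitted to labeled examples instead of typed in by an engineer. This sketch deliberately swaps in a much simpler learner than a CNN. It uses logistic regression, trained by gradient descent, on two hypothetical per-image features scaled from 0 to 1: an "elongation" score and a "local contrast" score. A CNN learns its features from raw pixels. Treat this only as an illustration of learning from labels and predicting on unseen inputs, not as how a production inspection model works.

```python
import math

def train_logistic(samples, labels, lr=0.5, epochs=2000):
    """Fit weights w and bias b by batch gradient descent on the log-loss."""
    n_features = len(samples[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(samples, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))  # predicted probability of "defect"
            err = p - y
            for i in range(n_features):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / len(samples) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(samples)
    return w, b

def predict(w, b, x):
    """1 = defect, 0 = good."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

# Hypothetical features (elongation, local contrast) for labeled training images.
good = [(0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (0.2, 0.4)]
scratched = [(0.8, 0.7), (0.9, 0.6), (0.7, 0.9), (0.6, 0.8)]
X = good + scratched
y = [0] * len(good) + [1] * len(scratched)

w, b = train_logistic(X, y)
# Two inputs that were not in the training set.
print(predict(w, b, (0.85, 0.85)), predict(w, b, (0.15, 0.15)))
```

Nobody chose the weights; they came from the eight labeled examples. Adding more labeled images, rather than editing thresholds, is how this kind of model is adjusted.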

Pixel-Level Segmentation vs. Bounding Box Detection

Not all AI inspection is equal. There is a significant difference between systems that draw a bounding box around a region of interest (“there’s probably a defect somewhere in this 50×50 pixel area”) and systems that perform pixel-level semantic segmentation (“this specific set of 847 pixels is a scratch, and this other set of 212 pixels is a cosmetic mark that does not require rejection”).
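A small binary mask shows what a bounding box throws away. The sketch below assumes a segmentation model has already produced a mask, where 1 marks a scratch pixel. It derives the bounding box from that mask and compares the box area with the true defect area. The mask is a made-up example.

```python
def bounding_box(mask):
    """Return (top, left, bottom, right) enclosing all nonzero pixels, inclusive."""
    coords = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return min(rows), min(cols), max(rows), max(cols)

def box_area(box):
    top, left, bottom, right = box
    return (bottom - top + 1) * (right - left + 1)

# A thin diagonal scratch: 5 defect pixels spread across a 5x5 region.
scratch_mask = [
    [1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 0, 0, 1],
]
box = bounding_box(scratch_mask)
defect_pixels = sum(map(sum, scratch_mask))
print(box, box_area(box), defect_pixels)  # (0, 0, 4, 4) 25 5
```

The box reports a 25-pixel region for a scratch that occupies 5 pixels. The mask keeps the exact shape, which is what downstream size and zone rules need.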

Pixel-level segmentation is the approach that enables precise threshold tuning. When you know exactly which pixels constitute a defect, you know its area, its position relative to a functional zone and its depth signature from 2.5D imaging. That lets you make context-aware accept/reject decisions rather than blanket ones. A 0.3mm scratch in a cosmetic area might be acceptable; the same scratch crossing a sealing surface is not. Rule-based systems struggle to make this distinction reliably, because they first have to separate the scratch from normal surface variation. Pixel-level AI can make it.
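Here is a minimal sketch of the zone-aware decision described above. It assumes the segmentation output is a set of (row, col) defect pixels, the sealing surface is another pixel set, and the camera's pixel pitch is known. The 0.05 mm/pixel pitch, the cosmetic area limit and the function name are hypothetical. At that pitch, the 6-pixel scratch below is 0.3 mm long.

```python
def accept_part(defect_pixels, sealing_zone, mm_per_pixel=0.05,
                max_cosmetic_area_mm2=0.5):
    """Reject any defect touching the sealing zone; elsewhere, reject only large ones."""
    if defect_pixels & sealing_zone:
        return False  # any overlap with a functional surface is rejectable
    area_mm2 = len(defect_pixels) * mm_per_pixel ** 2
    return area_mm2 <= max_cosmetic_area_mm2

# Hypothetical sealing surface: rows 0-1 of a 10-column image.
sealing_zone = {(r, c) for r in range(2) for c in range(10)}
# The same 6-pixel scratch placed in a cosmetic area and across the seal.
cosmetic_scratch = {(5, c) for c in range(6)}
sealing_scratch = {(1, c) for c in range(6)}

print(accept_part(cosmetic_scratch, sealing_zone))  # True
print(accept_part(sealing_scratch, sealing_zone))   # False
```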

Comparing the Two Approaches: A Practical Framework

| Dimension | Traditional Machine Vision | AI Visual Inspection |
|---|---|---|
| Decision mechanism | Hand-coded rules (thresholds, blob analysis) | Deep learning from labeled image examples |
| Setup for new product | Engineer must rewrite/retune rules (days–weeks) | Retrain model on new images (minutes–hours) |
| Handles surface variation | Poor: rule drift causes false rejects or escapes | Robust: model learns normal variation vs. defects |
| Defect granularity | Binary or bounding-box region | Pixel-level segmentation with area/depth data |
| False Rejection control | Manual threshold tuning; trade-off with escape rate | Precise threshold tuning per defect type and zone |
| High-variance defects | Structurally challenging; often requires multiple cameras | Handles naturally via learned feature representations |
| Expertise required | Vision engineer with deep system knowledge | QA team can train models; no AI background required |
| Best for | Dimensional gauging, presence/absence, stable single SKU | Surface defects, multi-SKU lines, high-mix production |

The False Rejection Problem: Why It Matters More Than You Think

Counting the Real Cost of Overkill

False Rejection, also called “overkill,” is when a system rejects a perfectly good part. In traditional machine vision environments, FR rates of 2–8% are common on challenging surface types. This can look like harmless quality conservatism, but the downstream costs are significant. Every false reject requires manual re-inspection, so the labor that automation was supposed to eliminate flows back in. More critically, high false reject rates lead operators to distrust the system and override it manually. At that point the automation provides neither the efficiency gain nor the quality protection it was deployed for.
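The re-inspection burden is simple arithmetic. The sketch below uses hypothetical inputs, a daily volume and the seconds needed to manually re-check one rejected part. It shows how quickly a few percent of false rejects turns into labor hours; substitute your own line's numbers.

```python
def reinspection_hours_per_day(parts_per_day, false_reject_rate, seconds_per_recheck):
    """Hours of manual re-inspection generated by false rejects alone."""
    false_rejects = parts_per_day * false_reject_rate
    return false_rejects * seconds_per_recheck / 3600

# Hypothetical line: 20,000 parts/day, 30 s to re-check each rejected part.
for fr in (0.02, 0.08):
    hours = reinspection_hours_per_day(20_000, fr, 30)
    print(f"FR {fr:.0%}: {hours:.1f} h of re-inspection per day")
```

With these assumed inputs, a 2% rate costs about 3.3 hours a day and an 8% rate more than 13 hours a day. That is well over one inspector's full shift, spent confirming that good parts are good.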

How AI Enables Precise Threshold Control

Because AI systems with pixel-level segmentation know the exact size, shape, and location of every candidate defect, they enable a new kind of rejection logic. Rather than asking “is there a suspicious pattern anywhere in this region?” — which forces conservative thresholds — they can ask “is there a scratch longer than X mm located within Y mm of the sealing surface?” This specificity dramatically reduces false rejects on cosmetic features without compromising detection on critical ones.

UnitX’s CorteX platform is built around this architecture. Its pixel-level semantic segmentation output feeds directly into a configurable acceptance logic engine, letting QA engineers define precise rules about what actually constitutes a rejectable defect — not just “anomaly detected,” but “this specific class of anomaly, in this zone, above this size threshold.” The result is an inspection regime that matches how experienced human inspectors actually think, at production speeds.
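The kind of question posed above maps directly onto per-defect measurements: "is there a scratch longer than X mm located within Y mm of the sealing surface?" The sketch below is a generic illustration, not CorteX's configuration format or API. It assumes each segmented defect arrives as a class label plus a list of pixel coordinates. It approximates length as the largest distance between any two of the defect's pixels. It models the sealing surface as the edge along image row 0. The rule table, pixel pitch and limits are hypothetical.

```python
import math
from itertools import combinations

MM_PER_PIXEL = 0.05  # hypothetical calibration
SEAL_ROW = 0         # hypothetical: sealing edge lies along image row 0

# Hypothetical acceptance rules: (defect class, max length mm, clearance mm).
# A defect of that class closer than `clearance` to the seal and longer than
# `max length` is rejected; anything not matched by a rule is accepted.
RULES = [
    ("scratch", 0.5, 2.0),
]

def length_mm(pixels):
    """Largest pairwise pixel distance in mm (O(n^2), fine for a sketch)."""
    if len(pixels) < 2:
        return 0.0
    return max(math.dist(p, q) for p, q in combinations(pixels, 2)) * MM_PER_PIXEL

def distance_to_seal_mm(pixels):
    return min(abs(r - SEAL_ROW) for r, _ in pixels) * MM_PER_PIXEL

def is_rejectable(defect_class, pixels):
    for cls, max_len, clearance in RULES:
        if (defect_class == cls
                and distance_to_seal_mm(pixels) < clearance
                and length_mm(pixels) > max_len):
            return True
    return False

near_long = [(10, c) for c in range(20)]   # 0.95 mm long, 0.5 mm from the seal
far_long = [(100, c) for c in range(20)]   # same scratch, 5 mm from the seal
print(is_rejectable("scratch", near_long), is_rejectable("scratch", far_long))
```

The same scratch is rejected near the seal and accepted 5 mm away. A defect of another class, such as a cosmetic mark, passes because no rule targets it.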

When Traditional Machine Vision Is Still the Right Tool

This article isn’t arguing that traditional machine vision should be replaced everywhere. For specific tasks, it remains superior:

Dimensional measurement: If you need to verify that a shaft diameter is 12.000 ± 0.005mm, a calibrated machine vision gauging system with sub-pixel edge detection is still the most precise, most auditable approach. Deep learning is not optimized for high-precision metrology.

Simple presence/absence checks: Is the O-ring installed? Is the barcode present? For these binary, geometrically simple tasks, rule-based systems are fast and reliable, and replacing them with AI would be unnecessary over-engineering.

Highly constrained, single-SKU environments: If you run the exact same part, on the exact same line, with locked-down lighting, and the defect types are well-defined and geometrically simple, a well-tuned rule-based system may outperform AI — because AI’s advantage (generalization) isn’t needed.

The key word in all of these cases is “stable.” When the environment is stable, rules work. When the environment involves natural variation — surface texture changes, multi-SKU flexibility, subtle or complex defect types — AI’s learned representations produce better outcomes.
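To ground the dimensional-measurement case above, here is a minimal sketch of one simple variant of sub-pixel edge location. Along a single backlit scan line, it finds where intensity crosses the 50% level between the bright background and the dark shaft silhouette. It then interpolates linearly between neighboring pixels. The profile values and the 0.01 mm/pixel scale are hypothetical toy numbers. Commercial gauging tools use more robust edge models and calibrated optics.

```python
def subpixel_crossings(profile, level):
    """Positions where the profile crosses `level`, via linear interpolation."""
    crossings = []
    for i in range(len(profile) - 1):
        a, b = profile[i], profile[i + 1]
        if (a - level) * (b - level) < 0:  # sign change between samples i and i+1
            crossings.append(i + (level - a) / (b - a))
    return crossings

def diameter_mm(profile, mm_per_pixel):
    """Width of the dark silhouette between the first and last edge crossings."""
    level = (max(profile) + min(profile)) / 2  # 50% threshold
    edges = subpixel_crossings(profile, level)
    return (edges[-1] - edges[0]) * mm_per_pixel

# Toy backlit scan line: bright background (200), dark shaft (20), soft edges.
profile = [200, 200, 150, 20, 20, 20, 20, 120, 200, 200]
print(diameter_mm(profile, mm_per_pixel=0.01))
```

The edges land at about 2.31 and 6.9 pixels, which are positions between samples. That interpolated resolution, finer than one pixel, is what lets a well-calibrated gauging system approach tolerances like ±0.005mm.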

The Imaging Foundation: Why AI Alone Isn’t Enough

One detail that often gets lost in AI-vs-rules comparisons: the AI model is only as good as the images it receives. A deep learning system trained on poor images will produce poor results. This is why OptiX’s software-defined imaging hardware is the foundation of the UnitX FleX platform rather than an afterthought.

With 32 independently controllable light channels, dynamic lighting scheme switching at 50 configurations per second, and 2.5D depth imaging that captures geometric surface features invisible to flat 2D sensors, OptiX consistently delivers images that give CorteX’s AI the information it needs to distinguish real defects from surface noise. The result is a system where the imaging and AI layers are designed together — not assembled from generic components.
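The switching rate sets a capture budget per part: 50 configurations per second works out to 20 ms per lighting scheme. The back-of-envelope sketch below assumes each part is imaged under k lighting schemes and that capture is the only bottleneck, ignoring motion, exposure and inference time. The values of k are hypothetical.

```python
SCHEMES_PER_SECOND = 50  # switching rate quoted above

def capture_time_s(num_schemes):
    """Seconds to image one part under num_schemes lighting configurations."""
    return num_schemes / SCHEMES_PER_SECOND

for k in (1, 4, 8):
    t = capture_time_s(k)
    print(f"{k} schemes: {t * 1000:.0f} ms per part, "
          f"up to {60 / t:.0f} parts/min if capture-bound")
```

Under these assumptions, eight lighting schemes per part still fit in 160 ms of capture time.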

“The goal isn’t to have the most sophisticated AI. The goal is to have zero critical escapes and the minimum acceptable false rejection rate — sustainably, across every production shift, every SKU, and every lighting condition.” — UnitX Applications Engineering Team

Frequently Asked Questions

Can I add AI to my existing machine vision cameras?

In some cases, yes — software-only AI solutions can run inference on images captured by existing hardware. However, if your current lighting setup is inadequate for the defects you’re trying to detect, better algorithms won’t fix a photon problem. Always assess whether your image quality is sufficient before deciding the bottleneck is in the software layer.

How many defect images do I need to train an AI model?

This depends heavily on the AI architecture. Conventional deep learning typically requires thousands of labeled examples to generalize well. Small-sample AI approaches — like the architecture used in UnitX CorteX — can produce accurate models from as few as 5 images per defect type, using few-shot learning and, where real defect samples are scarce, synthetic data generation from GenX.

Will AI visual inspection require retraining every time I introduce a new product?

Yes, but the effort is on a very different scale. Retraining an AI model for a new SKU typically takes minutes to hours from a handful of new images, versus the days or weeks a vision engineer might spend rebuilding rule sets for a traditional system. The key factor is whether your AI platform includes an efficient model management workflow, like CorteX Train’s Central Management System, which tracks model versions and deployment status across multiple production lines.

Is AI visual inspection appropriate for safety-critical parts?

AI inspection is increasingly deployed on safety-critical components — including automotive structural parts, EV battery cells, and medical devices — precisely because of its higher detection accuracy compared to manual inspection and traditional machine vision on complex defect types. Any deployment in regulated environments should include a documented validation process (IQ/OQ/PQ protocol), capability studies, and ongoing performance monitoring. The technology supports this rigor; the process architecture requires explicit design.
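A validation set is finite, so a capability study for detection typically reports a confidence bound on the detection rate, not just the observed rate. One illustration is the Wilson score interval, which turns a count of caught defects into a lower bound on the true detection rate. This is an assumption about how you might summarize such a study, not a requirement of any specific IQ/OQ/PQ protocol. The 298-of-300 result below is hypothetical.

```python
import math

def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95% two-sided)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return center - half, center + half

# Hypothetical validation run: 298 of 300 seeded defects detected.
low, high = wilson_interval(298, 300)
print(f"observed {298 / 300:.2%}, 95% interval {low:.2%} to {high:.2%}")
```

Even a perfect 300-of-300 result supports a lower bound of only about 98.7% at this confidence level. This is why validation sample sizes deserve explicit planning.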

→ See how UnitX’s CorteX AI platform handles pixel-level defect classification and threshold control across real production environments.

→ Ready to evaluate AI inspection for your specific part types? Talk to UnitX experts — we’ll review your inspection challenge and tell you honestly whether AI is the right approach.